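I have been teaching myself Hadoop recently, so today I spent some time setting up a development environment and writing up the steps.

First, a look at the modes Hadoop can run in: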

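Standalone mode

Standalone mode is Hadoop's default. When the Hadoop package is first unpacked, Hadoop knows nothing about the hardware it is running on, so it conservatively picks a minimal configuration. In this default mode all three XML configuration files are empty, and with empty configuration files Hadoop runs entirely on the local machine. Because there are no other nodes to talk to, standalone mode does not use HDFS and does not start any Hadoop daemons. It is mainly useful for developing and debugging the application logic of MapReduce programs.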
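
As a rough illustration of standalone mode, the sketch below runs the grep example job that ships with Hadoop against a plain local directory, with no daemons and no HDFS involved. It assumes Hadoop 2.2.0 has already been unpacked and its configuration files are still untouched. `WORK_DIR` and the sample input file are made up for the example, and the jar path follows the /hadoop/hadoop-2.2.0 layout used later in this article.

```shell
# Scratch directory for the job's local input and output (hypothetical location).
WORK_DIR="${WORK_DIR:-$(mktemp -d)}"
mkdir -p "$WORK_DIR/input"

# A tiny made-up input file; any text files would do.
printf '%s\n' 'dfs.replication' 'dfs.namenode.name.dir' 'unrelated line' \
  > "$WORK_DIR/input/sample.txt"

# Examples jar as laid out in the 2.2.0 tarball (the HADOOP_HOME default is an assumption).
EXAMPLES_JAR="${HADOOP_HOME:-/hadoop/hadoop-2.2.0}/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar"

if command -v hadoop >/dev/null 2>&1 && [ -f "$EXAMPLES_JAR" ]; then
  # With empty config files, both paths are resolved on the local file system.
  hadoop jar "$EXAMPLES_JAR" grep "$WORK_DIR/input" "$WORK_DIR/output" 'dfs[a-z.]+'
  cat "$WORK_DIR/output"/*
else
  echo "hadoop or the examples jar was not found; nothing was run"
fi
```

The output directory must not exist before the job starts, which is why the sketch works in a fresh scratch directory.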

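Pseudo-distributed mode

Pseudo-distributed mode runs Hadoop on a "single-node cluster": all of the daemons run on one machine. Compared with standalone mode, it adds the ability to debug your code against real daemons, letting you inspect memory usage, HDFS input and output, and the interaction between daemons.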

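Fully distributed mode

In fully distributed mode the daemons are spread across a cluster of machines. The rest of this article sets up pseudo-distributed mode only.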

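1. Install the JDK and make Oracle's JDK the system default. This article takes /usr/java/jdk1.7.0_45 as the install directory and uses it as JAVA_HOME below. Note that the package installed here is 7u51 and the NameNode log further down reports java = 1.7.0_51, so check which directory the rpm actually created under /usr/java and adjust the path if it differs.

rpm -ivh jdk-7u51-linux-i586.rpm

To set the environment variables, it is best to create a new shell script in /etc/profile.d rather than edit an existing file:

vi jdk_install.sh

#This is a shell file for Java Environment Installation

export JAVA_HOME=/usr/java/jdk1.7.0_45

export PATH=$JAVA_HOME/bin:$PATH

export JRE_HOME=$JAVA_HOME/jre

export CLASSPATH=./:$JAVA_HOME/lib:$JAVA_HOME/jre/lib

Finally, run source jdk_install.sh to apply the settings, then check the result with java -version. Make sure the version reported is not OpenJDK.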
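
The advice to make sure `java -version` does not report OpenJDK can also be checked from a script. The following is a minimal sketch; `is_openjdk` is a helper name invented for this example, and it simply looks for the word "OpenJDK" in the version text.

```shell
# Succeeds (exit 0) when the given `java -version` text mentions OpenJDK.
is_openjdk() {
  printf '%s\n' "$1" | grep -qi 'openjdk'
}

if command -v java >/dev/null 2>&1; then
  # java -version writes to stderr, so merge it into stdout before capturing.
  version_text="$(java -version 2>&1)"
  if is_openjdk "$version_text"; then
    echo "WARNING: the java on PATH is OpenJDK; check JAVA_HOME and PATH"
  else
    echo "java on PATH does not report OpenJDK:"
    printf '%s\n' "$version_text"
  fi
else
  echo "java is not on PATH; source jdk_install.sh first"
fi
```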

2. Configure the Hadoop environment variables

#set hadoop2.2.0 environment

export HADOOP_HOME=/hadoop/hadoop-2.2.0

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin
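
Profile scripts tend to be sourced more than once, and each run of the lines above appends the same two directories to PATH again. A possible variation, assuming the same HADOOP_HOME, only appends a directory when it is missing; `path_append` is a hypothetical helper, not something Hadoop provides.

```shell
# Same install location as above; override HADOOP_HOME if yours differs.
export HADOOP_HOME="${HADOOP_HOME:-/hadoop/hadoop-2.2.0}"

# Append a directory to PATH only if it is not already listed.
path_append() {
  case ":$PATH:" in
    *":$1:"*) ;;                 # already present, do nothing
    *) PATH="$PATH:$1" ;;
  esac
}

path_append "$HADOOP_HOME/bin"
path_append "$HADOOP_HOME/sbin"
export PATH

# `hadoop version` is a quick way to confirm the command resolves.
if command -v hadoop >/dev/null 2>&1; then
  hadoop version
else
  echo "hadoop is not on PATH yet (is it unpacked under $HADOOP_HOME?)"
fi
```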

3. Unpack the Hadoop archive (in /hadoop, so that the result matches the HADOOP_HOME set above)

tar -zxvf hadoop-2.2.0.tar.gz

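4. Edit the XML configuration files. Hadoop's configuration files live in the etc/hadoop directory under the Hadoop installation; add the following content to each of them.

vim core-site.xml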

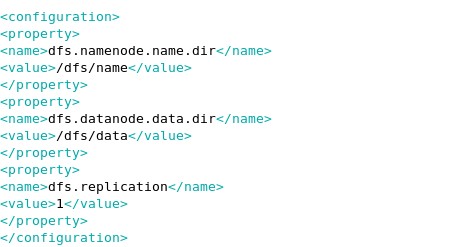

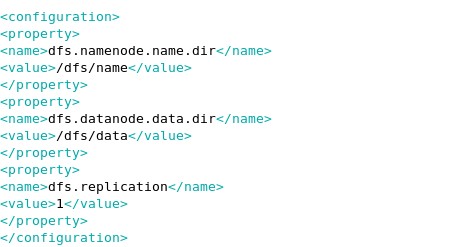
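
The author's exact values are only available in the screenshots, so the following is a guess at a typical pseudo-distributed core-site.xml rather than a copy of the original. `fs.defaultFS` is the Hadoop 2.x property that tells clients where the NameNode listens; the host and port (`localhost:9000`) and the scratch directory `CONF_DIR` are assumptions made for this sketch.

```shell
# Scratch directory for the sketch; the real files live in $HADOOP_HOME/etc/hadoop.
CONF_DIR="${CONF_DIR:-./hadoop-conf-sketch}"
mkdir -p "$CONF_DIR"

# Host and port are assumptions; use the address your NameNode should bind to.
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/core-site.xml"
```

Writing to a scratch directory first makes it possible to review the file before copying it over the one in etc/hadoop.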

vim hdfs-site.xml

This file sets the storage paths for the NameNode and the DataNode.
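
A sketch of what such an hdfs-site.xml might contain is shown here. The NameNode path /dfs/name and the replication factor of 1 match what the format log later in this article reports; the DataNode path /dfs/data is an assumption. The paths are written as file:// URIs because the log warns that a bare /dfs/name "should be specified as a URI". `CONF_DIR` is again a scratch directory invented for the example.

```shell
# Scratch directory for the sketch; the real files live in $HADOOP_HOME/etc/hadoop.
CONF_DIR="${CONF_DIR:-./hadoop-conf-sketch}"
mkdir -p "$CONF_DIR"

cat > "$CONF_DIR/hdfs-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <!-- One copy of each block is enough on a single machine. -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <!-- Where the NameNode keeps its metadata (path taken from the log). -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:///dfs/name</value>
  </property>
  <!-- Where the DataNode keeps block data (assumed path). -->
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:///dfs/data</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/hdfs-site.xml"
```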

vim mapred-site.xml
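
The article does not show what goes into mapred-site.xml. Since the setup later uses the YARN web UI on port 8088, a plausible minimal version tells MapReduce to run on YARN via `mapreduce.framework.name`; treat this as an assumption rather than the author's actual file. As far as I know, the 2.2.0 tarball ships only mapred-site.xml.template, in which case the file has to be created or copied from the template first.

```shell
# Scratch directory for the sketch; the real files live in $HADOOP_HOME/etc/hadoop.
CONF_DIR="${CONF_DIR:-./hadoop-conf-sketch}"
mkdir -p "$CONF_DIR"

cat > "$CONF_DIR/mapred-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <!-- Run MapReduce jobs on YARN instead of the local runner. -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/mapred-site.xml"
```

Running jobs on YARN usually also needs `yarn.nodemanager.aux-services` set to `mapreduce_shuffle` in yarn-site.xml, which this article does not cover.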

Finally, tell Hadoop which JDK to use: at the end of hadoop-env.sh, add export JAVA_HOME=/usr/java/jdk1.7.0_45, that is, the path of your own JDK directory.
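
If you prefer to script this step, one option is to append the line only when it is not already there, so rerunning the script does not pile up duplicates. In this sketch `ENV_FILE` defaults to a file in the current directory so it can be tried safely; point it at etc/hadoop/hadoop-env.sh in your installation for real use.

```shell
# Hypothetical default so the sketch can be tried safely; the real file is
# $HADOOP_HOME/etc/hadoop/hadoop-env.sh.
ENV_FILE="${ENV_FILE:-./hadoop-env.sh}"
JAVA_HOME_LINE='export JAVA_HOME=/usr/java/jdk1.7.0_45'

touch "$ENV_FILE"

# -x matches the whole line, -F treats the pattern as a fixed string.
if ! grep -qxF "$JAVA_HOME_LINE" "$ENV_FILE"; then
  printf '%s\n' "$JAVA_HOME_LINE" >> "$ENV_FILE"
fi

# Show the last line so the result can be eyeballed.
tail -n 1 "$ENV_FILE"
```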

5. Start Hadoop. Change to the installation directory. The NameNode has to be formatted first, so go into the bin directory and run:

./hdfs namenode -format

14/03/24 15:18:29 INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = hadoop/192.168.47.74

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.2.0

STARTUP_MSG: classpath = /hadoop/hadoop-2.2.0/etc/hadoop:/hadoop/hadoop-2.2.0/share/hadoop/common/lib/stax-api-1.0.1.jar:[... remaining jar entries under /hadoop/hadoop-2.2.0/share/hadoop omitted ...]:/hadoop/hadoop-2.2.0/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://svn.apache.org/repos/asf/hadoop/common -r 1529768; compiled by ‘hortonmu’ on 2013-10-07T06:28Z

STARTUP_MSG: java = 1.7.0_51

************************************************************/

14/03/24 15:18:29 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

14/03/24 15:18:29 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

14/03/24 15:18:30 WARN common.Util: Path /dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

14/03/24 15:18:30 WARN common.Util: Path /dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

Formatting using clusterid: CID-27a0d847-6b78-4748-abd9-a78e970f18e7

14/03/24 15:18:30 INFO namenode.HostFileManager: read includes:

HostSet(

)

14/03/24 15:18:30 INFO namenode.HostFileManager: read excludes:

HostSet(

)

14/03/24 15:18:30 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=1000

14/03/24 15:18:30 INFO util.GSet: Computing capacity for map BlocksMap

14/03/24 15:18:30 INFO util.GSet: VM type = 32-bit

14/03/24 15:18:30 INFO util.GSet: 2.0% max memory = 966.7 MB

14/03/24 15:18:30 INFO util.GSet: capacity = 2^22 = 4194304 entries

14/03/24 15:18:30 INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

14/03/24 15:18:30 INFO blockmanagement.BlockManager: defaultReplication = 1

14/03/24 15:18:30 INFO blockmanagement.BlockManager: maxReplication = 512

14/03/24 15:18:30 INFO blockmanagement.BlockManager: minReplication = 1

14/03/24 15:18:30 INFO blockmanagement.BlockManager: maxReplicationStreams = 2

14/03/24 15:18:30 INFO blockmanagement.BlockManager: shouldCheckForEnoughRacks = false

14/03/24 15:18:30 INFO blockmanagement.BlockManager: replicationRecheckInterval = 3000

14/03/24 15:18:30 INFO blockmanagement.BlockManager: encryptDataTransfer = false

14/03/24 15:18:30 INFO namenode.FSNamesystem: fsOwner = root (auth:SIMPLE)

14/03/24 15:18:30 INFO namenode.FSNamesystem: supergroup = supergroup

14/03/24 15:18:30 INFO namenode.FSNamesystem: isPermissionEnabled = true

14/03/24 15:18:30 INFO namenode.FSNamesystem: HA Enabled: false

14/03/24 15:18:30 INFO namenode.FSNamesystem: Append Enabled: true

14/03/24 15:18:30 INFO util.GSet: Computing capacity for map INodeMap

14/03/24 15:18:30 INFO util.GSet: VM type = 32-bit

14/03/24 15:18:30 INFO util.GSet: 1.0% max memory = 966.7 MB

14/03/24 15:18:30 INFO util.GSet: capacity = 2^21 = 2097152 entries

14/03/24 15:18:30 INFO namenode.NameNode: Caching file names occuring more than 10 times

14/03/24 15:18:30 INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

14/03/24 15:18:30 INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes = 0

14/03/24 15:18:30 INFO namenode.FSNamesystem: dfs.namenode.safemode.extension = 30000

14/03/24 15:18:30 INFO namenode.FSNamesystem: Retry cache on namenode is enabled

14/03/24 15:18:30 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis

14/03/24 15:18:30 INFO util.GSet: Computing capacity for map Namenode Retry Cache

14/03/24 15:18:30 INFO util.GSet: VM type = 32-bit

14/03/24 15:18:30 INFO util.GSet: 0.029999999329447746% max memory = 966.7 MB

14/03/24 15:18:30 INFO util.GSet: capacity = 2^16 = 65536 entries

14/03/24 15:18:30 INFO common.Storage: Storage directory /dfs/name has been successfully formatted.

14/03/24 15:18:30 INFO namenode.FSImage: Saving image file /dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression

14/03/24 15:18:30 INFO namenode.FSImage: Image file /dfs/name/current/fsimage.ckpt_0000000000000000000 of size 196 bytes saved in 0 seconds.

14/03/24 15:18:30 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0

14/03/24 15:18:30 INFO util.ExitUtil: Exiting with status 0

14/03/24 15:18:30 INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at hadoop/192.168.47.74

************************************************************/
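
In a log this long, the two lines that matter are "Storage directory /dfs/name has been successfully formatted" and "Exiting with status 0". A small sketch for checking a saved copy of the output is given here; `format_succeeded` and the `LOG_FILE` name are invented for the example, and the script only reads the log, it never runs the format itself.

```shell
# Succeeds when the log contains both success markers seen in the output above.
format_succeeded() {
  grep -q 'has been successfully formatted' "$1" &&
    grep -q 'Exiting with status 0' "$1"
}

# Hypothetical file name; capture it with:
#   ./hdfs namenode -format 2>&1 | tee namenode-format.log
LOG_FILE="${LOG_FILE:-./namenode-format.log}"

if [ -f "$LOG_FILE" ]; then
  if format_succeeded "$LOG_FILE"; then
    echo "NameNode format looks successful"
  else
    echo "success markers not found in $LOG_FILE; read the log for errors"
  fi
else
  echo "no log at $LOG_FILE; save the format output there first"
fi
```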

In Hadoop 2.2.0 you can start all of the daemons with start-all.sh (located in the sbin directory).
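
In Hadoop 2.x, start-all.sh itself reports that it is deprecated and points to start-dfs.sh and start-yarn.sh, and the start scripts typically need passwordless SSH to localhost, which this article does not cover. Once the daemons are started, `jps` from the JDK shows which Java processes are running. The sketch below compares its output with the daemons one would expect in a pseudo-distributed setup; the list of names and the `missing_daemons` helper are assumptions for this example.

```shell
# Daemons expected in a pseudo-distributed Hadoop 2.x setup (HDFS plus YARN).
EXPECTED_DAEMONS="NameNode DataNode SecondaryNameNode ResourceManager NodeManager"

# Print every expected daemon that does not appear in the given `jps` output.
missing_daemons() {
  for daemon in $EXPECTED_DAEMONS; do
    # jps prints "<pid> <ClassName>"; the leading space keeps "NameNode"
    # from matching "SecondaryNameNode".
    printf '%s\n' "$1" | grep -q " ${daemon}\$" || echo "$daemon"
  done
}

if command -v jps >/dev/null 2>&1; then
  missing="$(missing_daemons "$(jps)")"
  if [ -z "$missing" ]; then
    echo "all expected daemons are running"
  else
    echo "not running:"
    echo "$missing"
  fi
else
  echo "jps not found; is the JDK bin directory on PATH?"
fi
```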

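Open http://localhost:8088 in a browser to reach the Hadoop management UI (the YARN ResourceManager).

Then switch to port 50070 (http://localhost:50070) to view node information.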
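
On a machine without a browser, the same two pages can be probed from the command line. This is a minimal sketch using curl, assuming both UIs listen on localhost at the ports mentioned above; `ui_status` is a helper invented for the example and only reports whether an HTTP request succeeded.

```shell
RM_URL="http://localhost:8088"     # YARN ResourceManager UI
NN_URL="http://localhost:50070"    # HDFS NameNode UI

# Print "up" if the URL answers within 5 seconds without an HTTP error, else "down".
ui_status() {
  if curl -s -f -o /dev/null -m 5 "$1"; then
    echo "up"
  else
    echo "down"
  fi
}

if command -v curl >/dev/null 2>&1; then
  echo "ResourceManager UI ($RM_URL): $(ui_status "$RM_URL")"
  echo "NameNode UI ($NN_URL): $(ui_status "$NN_URL")"
else
  echo "curl not found; open the two URLs in a browser instead"
fi
```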

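The deployment is complete: we now have a pseudo-distributed, single-machine Hadoop development environment. A later post will look at the Hadoop distributed file system (HDFS) to get a feel for Google's approach to file systems; HDFS follows much the same design as the Google File System (GFS).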
