| Host | Active NN | Standby NN | DN | JournalNode | Zookeeper | FailoverController |
| --- | --- | --- | --- | --- | --- | --- |
| master | V | | | V | V | V |
| slave1 | | V | V | V | V | V |
| slave2 | | | V | V | V | |
| slave3 | | | V | | | |

1. Download the stable release of Zookeeper

5. Edit hdfs-site.xml
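
As a rough illustration of what the HA-related part of hdfs-site.xml might contain, the Python sketch below renders a minimal property set for a Quorum Journal Manager setup. The nameservice name `mycluster`, the NameNode IDs `nn1` and `nn2`, the placement of JournalNodes on master, slave1 and slave2, and the ports 8020 and 8485 are assumptions made for this example, not values taken from the original configuration. Fencing (`dfs.ha.fencing.methods`), HTTP addresses and local directories are left out and would still have to be set for a real cluster.

```python
import xml.etree.ElementTree as ET

# All names below are assumptions for illustration only.
NAMESERVICE = "mycluster"                        # hypothetical logical nameservice
NAMENODES = {"nn1": "master", "nn2": "slave1"}   # hypothetical NameNode ID -> host
JOURNAL_HOSTS = ["master", "slave1", "slave2"]   # assumed JournalNode hosts


def ha_properties():
    """Return a minimal set of HA properties for hdfs-site.xml."""
    journal_uri = (
        "qjournal://"
        + ";".join(f"{host}:8485" for host in JOURNAL_HOSTS)
        + f"/{NAMESERVICE}"
    )
    props = {
        "dfs.nameservices": NAMESERVICE,
        f"dfs.ha.namenodes.{NAMESERVICE}": ",".join(NAMENODES),
        "dfs.namenode.shared.edits.dir": journal_uri,
        f"dfs.client.failover.proxy.provider.{NAMESERVICE}":
            "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",
        "dfs.ha.automatic-failover.enabled": "true",
    }
    # One RPC address per NameNode ID.
    for nn_id, host in NAMENODES.items():
        props[f"dfs.namenode.rpc-address.{NAMESERVICE}.{nn_id}"] = f"{host}:8020"
    return props


def render(props):
    """Render the properties as a Hadoop <configuration> XML document."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    ET.indent(root)  # pretty-print; requires Python 3.9+
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    print(render(ha_properties()))
```

Automatic failover additionally needs `ha.zookeeper.quorum` in core-site.xml, pointing at the Zookeeper hosts.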

6. Copy the modified core-site.xml and hdfs-site.xml to every Hadoop node.
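
One possible way to script the copy is sketched below. It only builds and prints the `scp` commands, so they can be reviewed before being run. The configuration directory `/opt/hadoop/etc/hadoop` is a hypothetical path, and the sketch assumes the files were edited on master and that passwordless SSH to the other nodes is already in place.

```python
import shlex

# Hypothetical values for illustration; adjust to the actual installation.
CONF_DIR = "/opt/hadoop/etc/hadoop"
FILES = ["core-site.xml", "hdfs-site.xml"]
OTHER_NODES = ["slave1", "slave2", "slave3"]


def scp_commands(nodes=OTHER_NODES, conf_dir=CONF_DIR, files=FILES):
    """Build one scp command (as an argument list) per target node."""
    sources = [f"{conf_dir}/{name}" for name in files]
    return [["scp", *sources, f"{node}:{conf_dir}/"] for node in nodes]


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    for cmd in scp_commands():
        print(shlex.join(cmd))
```

Each argument list can be passed to `subprocess.run(cmd, check=True)` once the printed commands look right.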

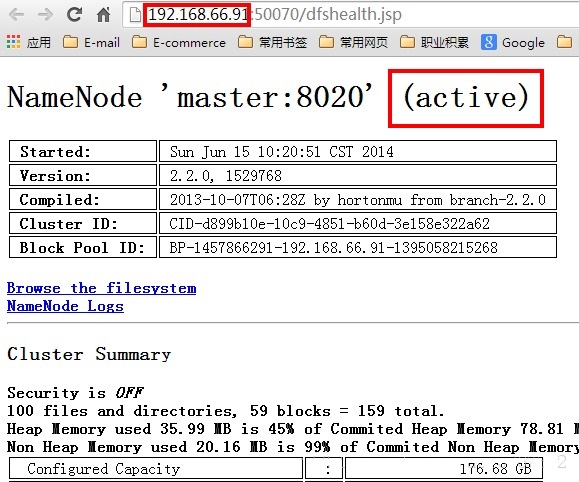

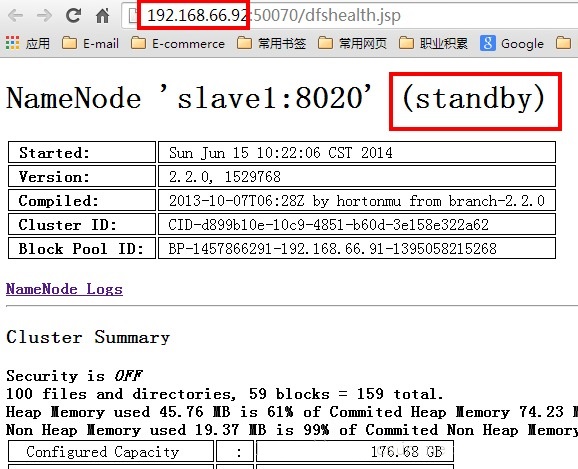

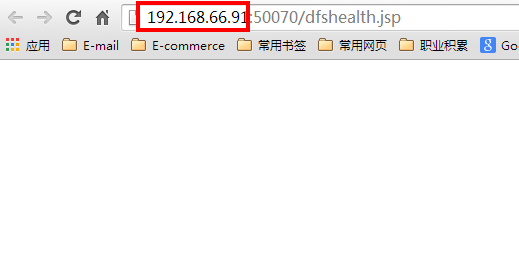

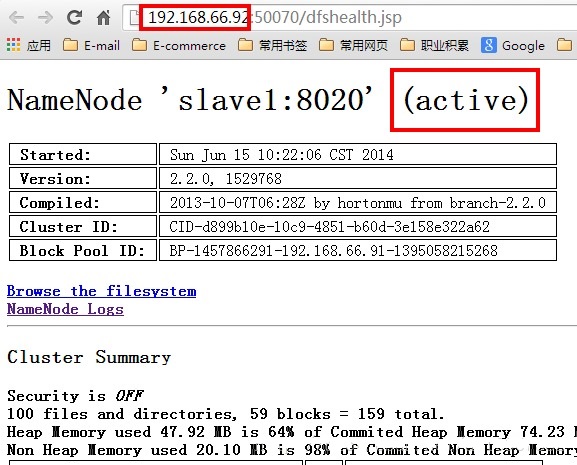

8. Verification 1 – automatic active/standby failover
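
A failover check of this kind could be scripted roughly as follows: record the state of each NameNode with `hdfs haadmin -getServiceState`, stop the active NameNode, query again, and confirm that the active role has moved. The NameNode IDs `nn1` and `nn2` are assumptions for this sketch, and `get_state` only works on a machine where the `hdfs` command and the cluster configuration are available.

```python
import subprocess


def get_state(nn_id):
    """Ask the cluster for the HA state ("active" or "standby") of one NameNode."""
    result = subprocess.run(
        ["hdfs", "haadmin", "-getServiceState", nn_id],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def active_nodes(states):
    """Return the NameNode IDs whose recorded state is "active"."""
    return [nn_id for nn_id, state in states.items() if state == "active"]


def failover_happened(before, after):
    """True when exactly one NameNode was active in each snapshot
    and the active role moved to a different NameNode."""
    was_active = active_nodes(before)
    now_active = active_nodes(after)
    return len(was_active) == 1 and len(now_active) == 1 and was_active != now_active


if __name__ == "__main__":
    nn_ids = ["nn1", "nn2"]  # hypothetical NameNode IDs
    before = {nn_id: get_state(nn_id) for nn_id in nn_ids}
    input("Stop the active NameNode, wait a few seconds, then press Enter...")
    after = {}
    for nn_id in nn_ids:
        try:
            after[nn_id] = get_state(nn_id)
        except subprocess.CalledProcessError:
            after[nn_id] = "unreachable"  # the stopped NameNode no longer answers
    print("failover happened:", failover_happened(before, after))
```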

10. Verification 3 – HA is transparent to client programs
