共计 4528 个字符,预计需要花费 12 分钟才能阅读完成。

| 导读 | 最近遇到一个问题,系统不能睡眠到 c7s, 只能睡眠到 c3. (c-state 不能到 c7s, cpu 的 c -state, c0 是运行态,其它状态都是 idle 态,睡眠的越深,c-state 的值越大)。 |

发现问题后,我的第一感觉是不是系统很忙导致, 使用 pert top 看一下耗 cpu 的进程和热点函数:

perf top -E 100 --stdio > perf-top.txt

19.85% perf [.] __symbols__insert

7.68% perf [.] rb_next

4.60% libc-2.26.so [.] __strcmp_sse2_unaligned

4.20% libelf-0.168.so [.] gelf_getsym

3.92% perf [.] dso__load_sym

3.86% libc-2.26.so [.] _int_malloc

3.60% libc-2.26.so [.] __libc_calloc

3.30% libc-2.26.so [.] vfprintf

2.95% perf [.] rb_insert_color

2.61% [kernel] [k] prepare_exit_to_usermode

2.51% perf [.] machine__map_x86_64_entry_trampolines

2.31% perf [.] symbol__new

2.22% [kernel] [k] do_syscall_64

2.11% libc-2.26.so [.] __strlen_avx2发现系统中只有 perf 工具本身比较耗 cpu :(

然后就想到是不是系统中某个进程搞的鬼,不让 cpu 睡眠到 c7s. 这时候使用 trace event 监控一下系统中 sched_switch 事件. 使用 trace-cmd 工具监控所有 cpu 上的 sched_switch(进程切换) 事件 30 秒:

#trace-cmd record -e sched:sched_switch -M -1 sleep 30

CPU0 data recorded at offset=0x63e000

102400 bytes in size

CPU1 data recorded at offset=0x657000

8192 bytes in size

CPU2 data recorded at offset=0x659000

20480 bytes in size

CPU3 data recorded at offset=0x65e000

20480 bytes in size使用 trace-cmd report 查看一下监控结果,但是查看这样的原始数据不够直观,没有某个进程被切换到的统计信息:

#trace-cmd report

cpus=4

trace-cmd-19794 [001] 225127.464466: sched_switch: trace-cmd:19794 [120] S ==> swapper/1:0 [120]

trace-cmd-19795 [003] 225127.464601: sched_switch: trace-cmd:19795 [120] S ==> swapper/3:0 [120]

sleep-19796 [002] 225127.464792: sched_switch: sleep:19796 [120] S ==> swapper/2:0 [120]

-0 [003] 225127.471948: sched_switch: swapper/3:0 [120] R ==> rcu_sched:11 [120]

rcu_sched-11 [003] 225127.471950: sched_switch: rcu_sched:11 [120] W ==> swapper/3:0 [120]

-0 [003] 225127.479959: sched_switch: swapper/3:0 [120] R ==> rcu_sched:11 [120]

rcu_sched-11 [003] 225127.479960: sched_switch: rcu_sched:11 [120] W ==> swapper/3:0 [120]

-0 [003] 225127.487959: sched_switch: swapper/3:0 [120] R ==> rcu_sched:11 [120]

rcu_sched-11 [003] 225127.487961: sched_switch: rcu_sched:11 [120] W ==> swapper/3:0 [120]

-0 [002] 225127.491959: sched_switch: swapper/2:0 [120] R ==> kworker/2:2:19735 [120]

kworker/2:2-19735 [002] 225127.491972: sched_switch: kworker/2:2:19735 [120] W ==> swapper/2:0 [120]

trace-cmd report 的结果使用正则表达式过滤一下,然后排序统计:

trace-cmd report | grep -o '==> [^]\+:\?' | sort | uniq -c

3 ==> irqbalance:1034

3 ==> khugepaged:43

20 ==> ksoftirqd/0:10

1 ==> ksoftirqd/1:18

18 ==> ksoftirqd/3:30

1 ==> kthreadd:19798

1 ==> kthreadd:2

4 ==> kworker/0:0:19785

1 ==> kworker/0:1:19736

5 ==> kworker/0:1:19798

5 ==> kworker/0:1H:364

53 ==> kworker/0:2:19614

19 ==> kworker/1:1:7665

30 ==> tuned:19498

...发现可疑线程 tuned,30 秒内被切换到运行了 30 次,其它线程都是常规线程。

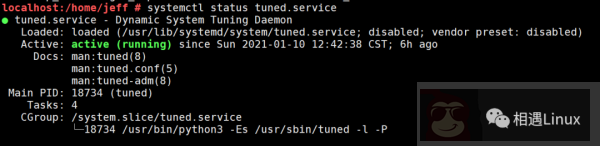

此时查看一下系统中是否开启了 tuned 服务:

果真是系统开启了 tuned 服务,然后拉起了名字为 tuned 的线程.

查看一下 tuned 服务的配置文件:

localhost:/home/jeff # tuned-adm active

Current active profile: sap-hana

localhost:/home/jeff # cat /usr/lib/tuned/sap-hana/tuned.conf

[main]

summary=Optimize for SAP NetWeaver, SAP HANA and HANA based products

[cpu]

force_latency = 70发现关于 cpu 这一项,设置强制延迟时间为 70 秒 force_latency = 70 , 这个是为了优化 HANA 数据库。

到底 force_latency 怎样起作用,经过一顿搜索,发现这个值是被设置进了 /dev/cpu_dma_latency

使用 lsof /dev/cpu_dma_latency, 发现 tuned 线程确实是在操作这个文件

#lsof /dev/cpu_dma_latency

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

tuned 18734 root 9w CHR 10,60 0t0 11400 /dev/cpu_dma_latency而且 Linux 内核文档也说明了 /dev/cpu_dma_latency 文件, 如果要对它进行写操作,要 open 之后写数据之后不 close, 如果释放掉了文件描述符它就又会恢复到默认值,这也印证了上面 lsof /dev/cpu_dma_latency 是有输出结果的.

https://github.com/torvalds/linux/blob/v5.8/Documentation/trace/coresight/coresight-cpu-debug.rst

As specified in the PM QoS documentation the requested parameter

will stay in effect until the file descriptor is released. For example:

# exec 3 /dev/cpu_dma_latency; echo 0 >&3

...

Do some work...

...

# exec 3-查看一下 /dev/cpu_dma_latency 文件的内容,确实是 70,也就是 (force_latency = 70)

localhost:/home/jeff # cat /dev/cpu_dma_latency | hexdump -Cv

00000000 46 00 00 00 |F...|

localhost:/home/jeff # echo $((0x46))

70此时查看一下系统中 cpu 各个睡眠态的描述和延迟时间值:

# cd /sys/devices/system/cpu/cpu0/cpuidle/

# for state in * ; do

echo -e \

"STATE: $state\t\

DESC: $(cat $state/desc)\t\

NAME: $(cat $state/name)\t\

LATENCY: $(cat $state/latency)\t\

RESIDENCY: $(cat $state/residency)"

done发现 C3 态的延迟时间是 33 微秒,C4 的延时时间是 133 微秒,所以 (force_latency = 70),

系统就只能睡眠到 C3 了 .(延迟时间就是从此睡眠态唤醒到运行态的时间)

STATE: state0 DESC: CPUIDLE CORE POLL IDLE NAME: POLL LATENCY: 0 RESIDENCY: 0

STATE: state1 DESC: MWAIT 0x00 NAME: C1 LATENCY: 2 RESIDENCY: 2

STATE: state2 DESC: MWAIT 0x01 NAME: C1E LATENCY: 10 RESIDENCY: 20

STATE: state3 DESC: MWAIT 0x10 NAME: C3 LATENCY: 33 RESIDENCY: 100

STATE: state4 DESC: MWAIT 0x20 NAME: C6 LATENCY: 133 RESIDENCY: 400

STATE: state5 DESC: MWAIT 0x32 NAME: C7s LATENCY: 166 RESIDENCY: 500此时关闭 tuned 服务, 再查看一下 /dev/cpu_dma_latency 的值,变成了默认的 2000 秒

localhost:/home/jeff # tuned-adm off

localhost:/home/jeff # cat /dev/cpu_dma_latency | hexdump -Cv

00000000 00 94 35 77 |..5w|

localhost:/home/jeff # echo $((0x77359400))

2000000000然后验证一下,此时系统可以睡眠到 C7s 了,此问题得到解决 :)

解决此问题,主要用到了 Linux 内核本身提供的 trace-event.

所以任何一个功能都不能小看,内核就是这样,一般看上去很无聊的功能,被一些工程师用很认真的态度打磨出来之后,潜力还是非常大的:)