
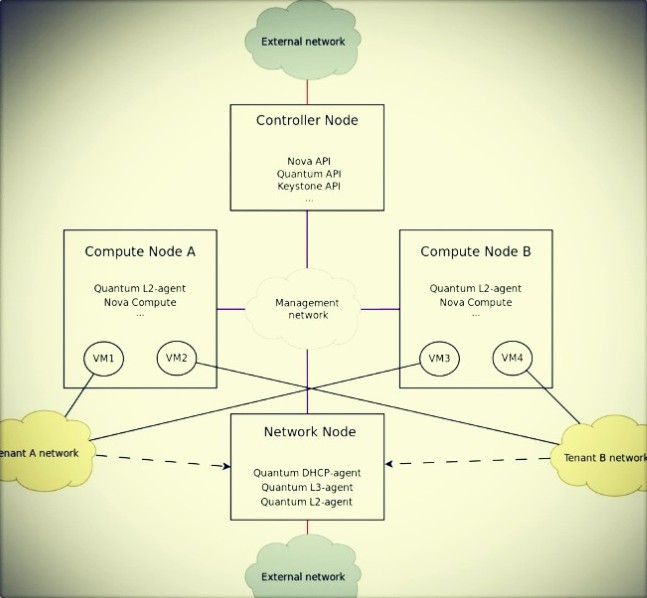

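I deploy on three machines here; you can scale out horizontally by adding more compute nodes. The network layout is as follows:

control node: eth0 (172.16.0.51), eth1 (192.168.8.51)

network node: eth0 (172.16.0.52), eth1 (10.10.10.52), eth2 (192.168.8.52)

compute node: eth0 (172.16.0.53), eth1 (10.10.10.53)

Management network: 172.16.0.0/16

Data (VM traffic) network: 10.10.10.0/24

External network: 192.168.8.0/24

The following diagram is borrowed from Mirantis:

All three nodes' NICs are connected to the same switch. I have not set up a local apt mirror for Grizzly, so the compute node still has to fetch packages from the public Internet. For that reason I temporarily give it a virtual NIC for installing packages.

Document updates:

2013.04.01: I had installed both nova-compute and nova-conductor on the compute node, but nova-conductor only needs to be installed on the control node. I also found that after a reboot the network node's eth2 interface is not brought up and has to be brought up by hand. That command has been added to rc.local.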

Contents

- 1 Control node
  - 1.1 Network settings
  - 1.2 Add the package source
  - 1.3 MySQL & RabbitMQ
  - 1.4 NTP
  - 1.5 Keystone
  - 1.6 Glance
  - 1.7 Cinder
  - 1.8 Quantum
  - 1.9 Nova
  - 1.10 Horizon
- 2 Network node
  - 2.1 Network settings
  - 2.2 Add the package source
  - 2.3 OpenVSwitch
  - 2.4 Quantum
- 3 Compute node
  - 3.1 Network settings
  - 3.2 Add the package source
  - 3.3 OpenVSwitch
  - 3.4 Quantum
  - 3.5 Nova
- 4 Creating VMs

Control node

Network settings

```
cat /etc/network/interfaces
auto eth0
iface eth0 inet static
    address 172.16.0.51
    netmask 255.255.0.0

auto eth1
iface eth1 inet static
    address 192.168.8.51
    netmask 255.255.255.0
    gateway 192.168.8.1
    dns-nameservers 8.8.8.8
```

Add the package source

Add the Grizzly repository and upgrade the system:

```
cat > /etc/apt/sources.list.d/grizzly.list << _GEEK_
deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-updates/grizzly main
deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-proposed/grizzly main
_GEEK_
apt-get update
apt-get upgrade
apt-get install ubuntu-cloud-keyring
```

MySQL & RabbitMQ

- Install MySQL:

apt-get install mysql-server python-mysqldb

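- Use sed to edit /etc/mysql/my.cnf. Change the bind address from localhost (127.0.0.1) to 0.0.0.0, and turn off DNS lookups in MySQL (skip-name-resolve). This avoids connection errors and slow remote connections. Then restart the MySQL service:

```
sed -i 's/127.0.0.1/0.0.0.0/g' /etc/mysql/my.cnf
# inserts the option at line 44; check that this line is inside [mysqld] in your my.cnf
sed -i '44 i skip-name-resolve' /etc/mysql/my.cnf
/etc/init.d/mysql restart
```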
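The `sed '44 i ...'` command above only works if line 44 of your my.cnf is inside the [mysqld] section. The minimal sketch below anchors on the section header instead. It assumes the stock layout, with a `[mysqld]` header and a `bind-address` line. The function name `tune_mycnf` is hypothetical.

```shell
#!/bin/sh
# Sketch: prepare my.cnf for remote access without relying on line numbers.
# Assumes a "[mysqld]" header and a "bind-address" line, as in the stock file.
tune_mycnf() {
    cnf=${1:-/etc/mysql/my.cnf}
    # Listen on all interfaces instead of only 127.0.0.1 (tabs or spaces allowed).
    sed -i 's/^bind-address[[:space:]]*=.*/bind-address = 0.0.0.0/' "$cnf"
    # Add skip-name-resolve right after [mysqld], only if it is not there yet,
    # so running the function twice does not duplicate the option.
    if ! grep -qx 'skip-name-resolve' "$cnf"; then
        sed -i '/^\[mysqld\]/a skip-name-resolve' "$cnf"
    fi
}
```

Run `tune_mycnf /etc/mysql/my.cnf` and then `/etc/init.d/mysql restart`. Running it again leaves a single skip-name-resolve line.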

- Install RabbitMQ:

apt-get install rabbitmq-server

NTP

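- Install the NTP service:

apt-get install ntp

- Configure the NTP server so that the other nodes can synchronize with the control node. 127.127.1.0 is the local clock, used as a fallback source at stratum 10:

```
sed -i 's/server ntp.ubuntu.com/server ntp.ubuntu.com\nserver 127.127.1.0\nfudge 127.127.1.0 stratum 10/g' /etc/ntp.conf
service ntp restart
```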

- Enable IP forwarding:

```
vim /etc/sysctl.conf
# set (uncomment) the following line:
net.ipv4.ip_forward=1
# apply the change without rebooting:
sysctl -p
```
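The same IP-forwarding change is repeated later on the network and compute nodes. The sketch below makes the change without an editor. It assumes the Ubuntu default, where the line ships commented out as `#net.ipv4.ip_forward=1`. `enable_ip_forward` is a hypothetical name.

```shell
#!/bin/sh
# Sketch: make sure sysctl.conf contains exactly one active
# "net.ipv4.ip_forward=1" line, uncommenting an existing entry if present.
enable_ip_forward() {
    conf=${1:-/etc/sysctl.conf}
    if grep -q '^[#[:space:]]*net\.ipv4\.ip_forward' "$conf"; then
        # Rewrite the (possibly commented-out) existing line in place.
        sed -i 's/^[#[:space:]]*net\.ipv4\.ip_forward.*/net.ipv4.ip_forward=1/' "$conf"
    else
        # No entry at all: append one.
        echo 'net.ipv4.ip_forward=1' >> "$conf"
    fi
}
```

Typical use is `enable_ip_forward && sysctl -p`.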

Keystone

- Install Keystone:

apt-get install keystone

- Create the keystone database in MySQL and grant privileges:

```
mysql -uroot -p
create database keystone;
grant all on keystone.* to 'keystone'@'%' identified by 'keystone';
quit;
```
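The same create-and-grant pattern repeats below for glance, cinder, quantum and nova. The sketch below is a hypothetical helper that prints those statements. As in this guide, it assumes that the database, user and password all share the service name unless a password is passed.

```shell
#!/bin/sh
# Sketch: print the SQL that creates a database named after the service and
# lets the same-named user access it from any host.
service_db_sql() {
    name=$1
    pass=${2:-$1}   # default: password equals the service name, as in this guide
    printf "CREATE DATABASE %s;\n" "$name"
    printf "GRANT ALL ON %s.* TO '%s'@'%%' IDENTIFIED BY '%s';\n" "$name" "$name" "$pass"
}
```

For example, `service_db_sql glance | mysql -uroot -p` replaces the interactive session for the Glance database.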

- Edit /etc/keystone/keystone.conf:

```
# in [DEFAULT]
admin_token = www.longgeek.com
debug = True
verbose = True

[sql]
# the connection line must be placed under [sql]
connection = mysql://keystone:keystone@172.16.0.51/keystone

[signing]
token_format = UUID
```
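Many steps in this guide boil down to "put key = value under [section]". The sketch below is a small hypothetical helper that does this non-interactively with awk. It is not an OpenStack tool. It does not handle commented-out keys or duplicate sections.

```shell
#!/bin/sh
# Hypothetical helper: set KEY = VALUE inside [SECTION] of an ini-style file.
# An existing uncommented KEY in that section is replaced; otherwise the key
# is added at the end of the section, and a missing section is appended.
# Note: awk -v interprets backslashes in VALUE, so avoid them.
ini_set() {
    file=$1 section=$2 key=$3 value=$4
    tmp=$(mktemp) || return 1
    awk -v s="[$section]" -v k="$key" -v v="$value" '
        function add_key() { if (insec && !done) { print k " = " v; done = 1 } }
        /^\[.*\]$/ {
            add_key()                    # leaving the target section: add key
            insec = ($0 == s)
            if (insec) seen = 1
        }
        insec && !done && $0 ~ ("^" k "[ \t]*=") {
            print k " = " v; done = 1; next    # replace the existing value
        }
        { print }
        END {
            if (!seen) { print ""; print s; insec = 1 }
            add_key()
        }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"   # cat keeps owner and mode
    rc=$?
    rm -f "$tmp"
    return $rc
}
```

For example: `ini_set /etc/keystone/keystone.conf sql connection mysql://keystone:keystone@172.16.0.51/keystone`.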

- Restart keystone, then sync the database:

```
/etc/init.d/keystone restart
keystone-manage db_sync
```

- Import data with a script:

The script creates the users, roles, tenants, services and endpoints. Download it:

wget http://download.longgeek.com/openstack/grizzly/keystone.sh

Edit the following settings in the script:

```
ADMIN_PASSWORD=${ADMIN_PASSWORD:-password}          # password of the admin tenant
SERVICE_PASSWORD=${SERVICE_PASSWORD:-password}      # password for nova, glance, cinder, quantum, swift
export SERVICE_TOKEN="www.longgeek.com"             # token (matches admin_token in keystone.conf)
export SERVICE_ENDPOINT="http://172.16.0.51:35357/v2.0"
SERVICE_TENANT_NAME=${SERVICE_TENANT_NAME:-service} # the service tenant, holding nova, glance, cinder, quantum, swift, etc.
KEYSTONE_REGION=RegionOne
KEYSTONE_IP="172.16.0.51"
#KEYSTONE_WLAN_IP="172.16.0.51"
SWIFT_IP="172.16.0.51"
#SWIFT_WLAN_IP="172.16.0.51"
COMPUTE_IP=$KEYSTONE_IP
EC2_IP=$KEYSTONE_IP
GLANCE_IP=$KEYSTONE_IP
VOLUME_IP=$KEYSTONE_IP
QUANTUM_IP=$KEYSTONE_IP
```

Run the script:

sh keystone.sh

- Set the environment variables:

These values correspond to the settings in keystone.sh:

```
export OS_TENANT_NAME=admin   # if this is set to service, the other services fail to authenticate
export OS_USERNAME=admin
export OS_PASSWORD=password
export OS_AUTH_URL=http://172.16.0.51:5000/v2.0/
export OS_REGION_NAME=RegionOne
export SERVICE_TOKEN=www.longgeek.com
export SERVICE_ENDPOINT=http://172.16.0.51:35357/v2.0/
```
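To avoid retyping these exports in every new shell, you can keep them in a file and source it. The sketch below writes the values listed in this guide. The function name `write_openrc` and the default path `~/openrc` are assumptions; change the values if you edited keystone.sh.

```shell
#!/bin/sh
# Sketch: save the admin credentials to a file that can be sourced later.
write_openrc() {
    out=${1:-$HOME/openrc}
    cat > "$out" <<'EOF'
export OS_TENANT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=password
export OS_AUTH_URL=http://172.16.0.51:5000/v2.0/
export OS_REGION_NAME=RegionOne
export SERVICE_TOKEN=www.longgeek.com
export SERVICE_ENDPOINT=http://172.16.0.51:35357/v2.0/
EOF
}
```

After `write_openrc`, run `. ~/openrc` in any new shell before using the keystone, glance, nova or quantum clients.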

- Verify the Keystone installation with a simple test:

```
apt-get install curl openssl
curl http://172.16.0.51:35357/v2.0/endpoints -H 'x-auth-token: www.longgeek.com' | python -m json.tool
```


Glance

- Install Glance:

apt-get install glance

- Create the glance database and grant privileges:

```
mysql -uroot -p
create database glance;
grant all on glance.* to 'glance'@'%' identified by 'glance';
quit;
```

- Update /etc/glance/glance-api.conf:

```
verbose = True
debug = True
sql_connection = mysql://glance:glance@172.16.0.51/glance
workers = 4
registry_host = 172.16.0.51
notifier_strategy = rabbit
rabbit_host = 172.16.0.51
rabbit_userid = guest
rabbit_password = guest

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = password

[paste_deploy]
config_file = /etc/glance/glance-api-paste.ini
flavor = keystone
```

- Update /etc/glance/glance-registry.conf:

```
verbose = True
debug = True
sql_connection = mysql://glance:glance@172.16.0.51/glance

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = password

[paste_deploy]
config_file = /etc/glance/glance-registry-paste.ini
flavor = keystone
```

- Restart glance-api and glance-registry, then sync the database:

```
/etc/init.d/glance-api restart
/etc/init.d/glance-registry restart
glance-manage version_control 0
glance-manage db_sync
```

- Test the Glance installation by uploading an image. Download the CirrOS image and upload it:

```
wget https://launchpad.net/cirros/trunk/0.3.0/+download/cirros-0.3.0-x86_64-disk.img
glance image-create --name='cirros' --public --container-format=ovf --disk-format=qcow2 < ./cirros-0.3.0-x86_64-disk.img
```

- List the uploaded images:

glance image-list
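`--disk-format` should describe the actual image file, and the CirrOS disk image used here is qcow2. The minimal check below reads the standard QCOW magic bytes `QFI\xfb` at the start of the file. If `qemu-img` is installed, `qemu-img info` also reports the format. `is_qcow2` is a hypothetical name.

```shell
#!/bin/sh
# Sketch: succeed if FILE starts with the QCOW magic "QFI\xfb" (hex 514649fb).
is_qcow2() {
    magic=$(head -c 4 "$1" | od -An -tx1 | tr -d ' \n')
    [ "$magic" = "514649fb" ]
}
```

For example, `is_qcow2 cirros-0.3.0-x86_64-disk.img && echo qcow2` before running `glance image-create`.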

Cinder

- Install the packages Cinder needs:

apt-get install cinder-api cinder-common cinder-scheduler cinder-volume python-cinderclient iscsitarget open-iscsi iscsitarget-dkms

- Configure iSCSI and start the services:

```
# sets ISCSITARGET_ENABLE=true in /etc/default/iscsitarget
sed -i 's/false/true/g' /etc/default/iscsitarget
/etc/init.d/iscsitarget restart
/etc/init.d/open-iscsi restart
```

- Create the cinder database and grant the user access:

```
mysql -uroot -p
create database cinder;
grant all on cinder.* to 'cinder'@'%' identified by 'cinder';
quit;
```

- Edit /etc/cinder/cinder.conf:

```
cat /etc/cinder/cinder.conf
[DEFAULT]
# LOG/STATE
verbose = True
debug = False
iscsi_helper = ietadm
auth_strategy = keystone
volume_group = cinder-volumes
volume_name_template = volume-%s
state_path = /var/lib/cinder
volumes_dir = /var/lib/cinder/volumes
rootwrap_config = /etc/cinder/rootwrap.conf
api_paste_config = /etc/cinder/api-paste.ini
# RPC
rabbit_host = 172.16.0.51
rabbit_password = guest
rpc_backend = cinder.openstack.common.rpc.impl_kombu
# DATABASE
sql_connection = mysql://cinder:cinder@172.16.0.51/cinder
# API
osapi_volume_extension = cinder.api.contrib.standard_extensions
```

- Edit the [filter:authtoken] section at the end of /etc/cinder/api-paste.ini:

```
[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
service_protocol = http
service_host = 172.16.0.51
service_port = 5000
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = cinder
admin_password = password
signing_dir = /var/lib/cinder
```

- Create a volume group named cinder-volumes:

Here a file stands in for a disk partition.

```
dd if=/dev/zero of=/opt/cinder-volumes bs=1 count=0 seek=5G
losetup /dev/loop2 /opt/cinder-volumes
fdisk /dev/loop2
# At the fdisk prompts, type the following (ENTER = accept the default):
n
p
1
ENTER
ENTER
t
8e
w
```
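The `dd` command above writes no data (`count=0`). `seek=5G` only sets the file length, so /opt/cinder-volumes is a sparse file that takes up real disk space only as volumes are written to it. The small check below assumes GNU coreutils `stat`. The function name is hypothetical.

```shell
#!/bin/sh
# Sketch: succeed if FILE is sparse, i.e. the bytes actually allocated
# (blocks x block size) are fewer than its apparent size.
is_sparse() {
    info=$(stat -c '%b %B %s' "$1") || return 2
    set -- $info
    # $1 = allocated blocks, $2 = bytes per block, $3 = apparent size in bytes
    [ $(( $1 * $2 )) -lt "$3" ]
}
```

Right after the `dd` step, `is_sparse /opt/cinder-volumes` should succeed, and `du -h /opt/cinder-volumes` shows almost no usage.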

With the partition in place, create the physical volume and the volume group:

```
pvcreate /dev/loop2
vgcreate cinder-volumes /dev/loop2
```

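The loop device is not re-attached after a reboot, so the volume group disappears. Add the losetup command to /etc/rc.local before its final `exit 0` line, because anything after that line never runs:

sed -i '/^exit 0/i losetup /dev/loop2 /opt/cinder-volumes' /etc/rc.local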
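Ubuntu's /etc/rc.local ends with `exit 0`, so a command appended after that line never runs. The sketch below is a hypothetical helper that inserts a command before that line and skips duplicates when a step is re-run. The network node's `ifconfig eth2 up` can be added the same way.

```shell
#!/bin/sh
# Sketch: add CMD to rc.local just before the first line that is exactly
# "exit 0". Does nothing if CMD is already present; appends at the end if
# the file has no "exit 0" line.
rc_local_add() {
    cmd=$1
    rc=${2:-/etc/rc.local}
    grep -qxF "$cmd" "$rc" && return 0
    if grep -qx 'exit 0' "$rc"; then
        tmp=$(mktemp) || return 1
        awk -v c="$cmd" '$0 == "exit 0" && !done { print c; done = 1 } { print }' \
            "$rc" > "$tmp" && cat "$tmp" > "$rc"   # cat keeps the executable bit
        rm -f "$tmp"
    else
        printf '%s\n' "$cmd" >> "$rc"
    fi
}
```

For example, `rc_local_add 'losetup /dev/loop2 /opt/cinder-volumes'`.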

- Sync the database and restart the services:

```
cinder-manage db sync
/etc/init.d/cinder-api restart
/etc/init.d/cinder-scheduler restart
/etc/init.d/cinder-volume restart
```

Quantum

- Install the Quantum server and the OpenVSwitch plugin:

apt-get install quantum-server quantum-plugin-openvswitch

- Create the quantum database and grant the user access:

```
mysql -uroot -p
create database quantum;
grant all on quantum.* to 'quantum'@'%' identified by 'quantum';
quit;
```

- Edit the OVS plugin configuration file /etc/quantum/plugins/openvswitch/ovs_quantum_plugin.ini:

```
[DATABASE]
sql_connection = mysql://quantum:quantum@172.16.0.51/quantum
reconnect_interval = 2

[OVS]
tenant_network_type = gre
enable_tunneling = True
tunnel_id_ranges = 1:1000

[AGENT]
polling_interval = 2

[SECURITYGROUP]
```

- Edit /etc/quantum/quantum.conf:

```
[DEFAULT]
debug = True
verbose = True
state_path = /var/lib/quantum
bind_host = 0.0.0.0
bind_port = 9696
core_plugin = quantum.plugins.openvswitch.ovs_quantum_plugin.OVSQuantumPluginV2
api_paste_config = /etc/quantum/api-paste.ini
control_exchange = quantum
rabbit_host = 172.16.0.51
rabbit_password = guest
rabbit_port = 5672
rabbit_userid = guest
notification_driver = quantum.openstack.common.notifier.rpc_notifier
default_notification_level = INFO
notification_topics = notifications

[QUOTAS]

[DEFAULT_SERVICETYPE]

[SECURITYGROUP]

[AGENT]
root_helper = sudo quantum-rootwrap /etc/quantum/rootwrap.conf

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = quantum
admin_password = password
signing_dir = /var/lib/quantum/keystone-signing
```

- Start the quantum service:

/etc/init.d/quantum-server restart

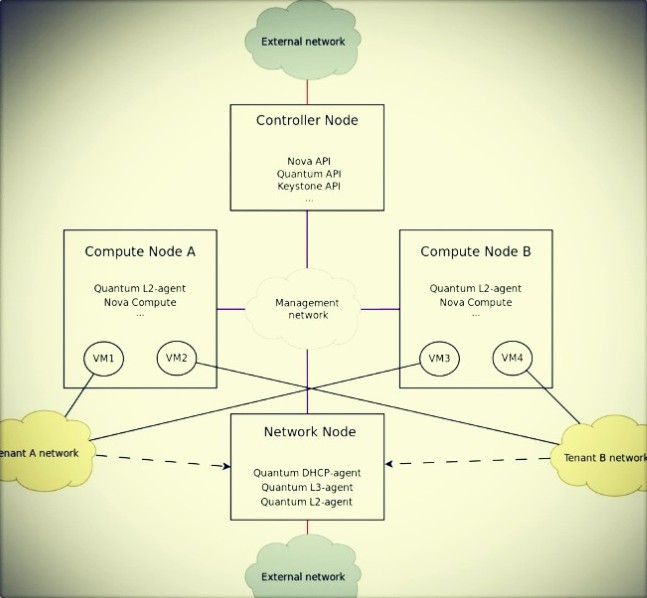

我这里用三台机器来部署, 你也可以横向扩展计算节点, 下面是网络情况:

control node: eth0(172.16.0.51), eth1(192.168.8.51)

network node : eth0(172.16.0.52), eth1(10.10.10.52), eth2(192.168.8.52)

compute node : eth0(172.16.0.53), eth1(10.10.10.53)

管理网络:172.16.0.0/16

业务网络:10.10.10.0/24

外部网络:192.168.8.0/24

下面是引用 mirantis 的一张图:

这里我的三个节点的网卡都连在了一个交换机上。因为我没有做 Grizzly 的本地 apt 源,计算节点还需要去公网 apt-get 包,所以我会在计算节点上临时设置一个虚拟网卡让它来装包。

文档更新:

2013.04.01 在计算节点上安装了 nova-compute 和 nova-conductor,而 nova-conductor 只需在控制节点安装就行了。同时发现网络节点在重启机器后,eth2 网卡没有激活,需要手工 up, 添加命令到 rc.local 中。

目录

- 1 控制节点

- 1.1 网络设置

- 1.2 添加源

- 1.3 MySQL & RabbitMQ

- 1.4 NTP

- 1.5 Keystone

- 1.6 Glance

- 1.7 Cinder

- 1.8 Quantum

- 1.9 Nova

- 1.10 Horizon

- 2 网络节点

- 2.1 网络设置

- 2.2 添加源

- 2.3 OpenVSwitch

- 2.4 Quantum

- 3 计算节点

- 3.1 网络设置

- 3.2 添加源

- 3.3 OpenVSwitch

- 3.4 Quantum

- 3.5 Nova

- 4 开始创建 vm

控制节点

网络设置

| cat /etc/network/interfaces | |

| auto eth0 | |

| iface eth0 inet static | |

| address 172.16.0.51 | |

| netmask 255.255.0.0 | |

| auto eth1 | |

| iface eth1 inet static | |

| address 192.168.8.51 | |

| netmask 255.255.255.0 | |

| gateway 192.168.8.1 | |

| dns-nameservers 8.8.8.8 |

添加源

添加 Grizzly 源,并升级系统

| cat > /etc/apt/sources.list.d/grizzly.list << _GEEK_ | |

| deb http://Ubuntu-cloud.archive.canonical.com/ubuntu precise-updates/grizzly main | |

| deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-proposed/grizzly main | |

| _GEEK_ | |

| apt-get update | |

| apt-get upgrade | |

| apt-get install ubuntu-cloud-keyring |

MySQL & RabbitMQ

- 安装 MySQL:

apt-get install mysql-server Python-mysqldb

- 使用 sed 编辑 /etc/mysql/my.cnf 文件的更改绑定地址(0.0.0.0)从本地主机(127.0.0.1)

禁止 mysql 做域名解析,防止 连接 mysql 出现错误和远程连接 mysql 慢的现象。

然后重新启动 mysql 服务.

| sed -i 's/127.0.0.1/0.0.0.0/g' /etc/mysql/my.cnf | |

| sed -i '44 i skip-name-resolve' /etc/mysql/my.cnf | |

| /etc/init.d/mysql restart |

- 安装 RabbitMQ:

apt-get install rabbitmq-server

NTP

- 安装 NTP 服务

apt-get install ntp

- 配置 NTP 服务器计算节点控制器节点之间的同步:

| sed -i 's/server ntp.ubuntu.com/server ntp.ubuntu.com\nserver 127.127.1.0\nfudge 127.127.1.0 stratum 10/g' /etc/ntp.conf | |

| service ntp restart |

- 开启路由转发

| vim /etc/sysctl.conf | |

| net.ipv4.ip_forward=1 |

Keystone

- 安装 Keystone

apt-get install keystone

- 在 mysql 里创建 keystone 数据库并授权:

| mysql -uroot -p | |

| create database keystone; | |

| grant all on keystone.* to 'keystone'@'%' identified by 'keystone'; | |

| quit; |

- 修改 /etc/keystone/keystone.conf 配置文件:

| admin_token = www.longgeek.com | |

| debug = True | |

| verbose = True | |

| [sql] | |

| connection = mysql://keystone:keystone@172.16.0.51/keystone #必须写到 [sql] 下面 | |

| [signing] | |

| token_format = UUID |

- 启动 keystone 然后同步数据库

| /etc/init.d/keystone restart | |

| keystone-manage db_sync |

- 用脚本导入数据:

用脚本来创建 user、role、tenant、service、endpoint,下载脚本:

wget http://download.longgeek.com/openstack/grizzly/keystone.sh

修改脚本内容:

| ADMIN_PASSWORD=${ADMIN_PASSWORD:-password} #租户 admin 的密码 | |

| SERVICE_PASSWORD=${SERVICE_PASSWORD:-password} #nova,glance,cinder,quantum,swift 的密码 | |

| export SERVICE_TOKEN="www.longgeek.com" # token | |

| export SERVICE_ENDPOINT="http://172.16.0.51:35357/v2.0" | |

| SERVICE_TENANT_NAME=${SERVICE_TENANT_NAME:-service} #租户 service,包含了 nova,glance,ciner,quantum,swift 等服务 | |

| KEYSTONE_REGION=RegionOne | |

| KEYSTONE_IP="172.16.0.51" | |

| #KEYSTONE_WLAN_IP="172.16.0.51" | |

| SWIFT_IP="172.16.0.51" | |

| #SWIFT_WLAN_IP="172.16.0.51" | |

| COMPUTE_IP=$KEYSTONE_IP | |

| EC2_IP=$KEYSTONE_IP | |

| GLANCE_IP=$KEYSTONE_IP | |

| VOLUME_IP=$KEYSTONE_IP | |

| QUANTUM_IP=$KEYSTONE_IP |

执行脚本:

sh keystone.sh

- 设置环境变量:

这里变量对于 keystone.sh 里的设置:

| export OS_TENANT_NAME=admin #这里如果设置为 service 其它服务会无法验证. | |

| export OS_USERNAME=admin | |

| export OS_PASSWORD=password | |

| export OS_AUTH_URL=http://172.16.0.51:5000/v2.0/ | |

| export OS_REGION_NAME=RegionOne | |

| export SERVICE_TOKEN=www.longgeek.com | |

| export SERVICE_ENDPOINT=http://172.16.0.51:35357/v2.0/ | |

| _GEEK_ | |

- 验证 keystone 的安装,做一个简单测试:

| apt-get install curl openssl | |

| curl http://172.16.0.51:35357/v2.0/endpoints -H 'x-auth-token: www.longgeek.com' | python -mjson.tool |

更多精彩内容请看下一页 :http://www.linuxidc.com/Linux/2013-09/92123p2.htm

相关阅读 :

在 Ubuntu 12.10 上安装部署 Openstack http://www.linuxidc.com/Linux/2013-08/88184.htm

Ubuntu 12.04 OpenStack Swift 单节点部署手册 http://www.linuxidc.com/Linux/2013-08/88182.htm

OpenStack 云计算快速入门教程 http://www.linuxidc.com/Linux/2013-08/88186.htm

企业部署 OpenStack:该做与不该做的事 http://www.linuxidc.com/Linux/2013-09/90428.htm

Nova

- Install the Nova packages:

apt-get install nova-api nova-cert novnc nova-conductor nova-consoleauth nova-scheduler nova-novncproxy

- Create the nova database and grant the nova user access:

```
mysql -uroot -p
create database nova;
grant all on nova.* to 'nova'@'%' identified by 'nova';
quit;
```

- Edit the authtoken section in /etc/nova/api-paste.ini:

```
[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = nova
admin_password = password
signing_dir = /tmp/keystone-signing-nova
# Workaround for https://bugs.launchpad.net/nova/+bug/1154809
auth_version = v2.0
```

- Edit /etc/nova/nova.conf along these lines:

```
[DEFAULT]
# LOGS/STATE
debug = False
verbose = True
logdir = /var/log/nova
state_path = /var/lib/nova
lock_path = /var/lock/nova
rootwrap_config = /etc/nova/rootwrap.conf
dhcpbridge = /usr/bin/nova-dhcpbridge

# SCHEDULER
compute_scheduler_driver = nova.scheduler.filter_scheduler.FilterScheduler

## VOLUMES
volume_api_class = nova.volume.cinder.API

# DATABASE
sql_connection = mysql://nova:nova@172.16.0.51/nova

# COMPUTE
libvirt_type = kvm
compute_driver = libvirt.LibvirtDriver
instance_name_template = instance-%08x
api_paste_config = /etc/nova/api-paste.ini

# COMPUTE/APIS: if you have separate configs for separate services
# this flag is required for both nova-api and nova-compute
allow_resize_to_same_host = True

# APIS
osapi_compute_extension = nova.api.openstack.compute.contrib.standard_extensions
ec2_dmz_host = 172.16.0.51
s3_host = 172.16.0.51
metadata_host = 172.16.0.51
metadata_listen = 0.0.0.0

# RABBITMQ
rabbit_host = 172.16.0.51
rabbit_password = guest

# GLANCE
image_service = nova.image.glance.GlanceImageService
glance_api_servers = 172.16.0.51:9292

# NETWORK
network_api_class = nova.network.quantumv2.api.API
quantum_url = http://172.16.0.51:9696
quantum_auth_strategy = keystone
quantum_admin_tenant_name = service
quantum_admin_username = quantum
quantum_admin_password = password
quantum_admin_auth_url = http://172.16.0.51:35357/v2.0
libvirt_vif_driver = nova.virt.libvirt.vif.LibvirtHybridOVSBridgeDriver
linuxnet_interface_driver = nova.network.linux_net.LinuxOVSInterfaceDriver
firewall_driver = nova.virt.libvirt.firewall.IptablesFirewallDriver

# NOVNC CONSOLE
novncproxy_base_url = http://192.168.8.51:6080/vnc_auto.html
# Change vncserver_proxyclient_address and vncserver_listen to match each compute host
vncserver_proxyclient_address = 192.168.8.51
vncserver_listen = 0.0.0.0

# AUTHENTICATION
auth_strategy = keystone

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = nova
admin_password = password
signing_dir = /tmp/keystone-signing-nova
```

- Sync the database and start the nova services:

```
nova-manage db sync
# restart every installed nova-* service
for svc in /etc/init.d/nova-*; do "$svc" restart; done
```

- Check the nova services. A running service shows a :-) smiley in the State column:

```
nova-manage service list
Binary           Host      Zone      Status   State Updated_At
nova-consoleauth control   internal  enabled  :-)   2013-03-31 09:55:43
nova-cert        control   internal  enabled  :-)   2013-03-31 09:55:42
nova-scheduler   control   internal  enabled  :-)   2013-03-31 09:55:41
nova-conductor   control   internal  enabled  :-)   2013-03-31 09:55:42
```
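As more nodes join, scanning the list for smileys by eye becomes error-prone. The sketch below is a hypothetical filter over the output of `nova-manage service list`. It assumes the six-column layout, where State is the fifth whitespace-separated field and shows `:-)` for a running service and `XXX` otherwise.

```shell
#!/bin/sh
# Sketch: read `nova-manage service list` output on stdin, print each
# service whose State field is not ":-)", and return 1 if any were found.
down_services() {
    awk 'NR > 1 && NF >= 5 && $5 != ":-)" { print $1 " on " $2; bad = 1 }
         END { exit (bad ? 1 : 0) }'
}
```

`nova-manage service list | down_services` prints nothing and returns 0 when every service is up.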

Horizon

- Install Horizon:

apt-get install openstack-dashboard memcached

- If you do not like the Ubuntu theme, you can disable it and use the default interface:

```
vim /etc/openstack-dashboard/local_settings.py
# Enable the Ubuntu theme if it is present.
#try:
#    from ubuntu_theme import *
#except ImportError:
#    pass
```

- Restart apache2 and memcached:

```
/etc/init.d/apache2 restart
/etc/init.d/memcached restart
```

You can now log in to the dashboard in a browser at http://192.168.8.51/horizon as admin with password `password`.

Network node

Network settings

```
# cat /etc/network/interfaces
auto eth0
iface eth0 inet static
    address 172.16.0.52
    netmask 255.255.0.0

auto eth1
iface eth1 inet static
    address 10.10.10.52
    netmask 255.255.255.0

auto eth2
iface eth2 inet manual

# /etc/init.d/networking restart
# ifconfig eth2 192.168.8.52/24 up
# route add default gw 192.168.8.1 dev eth2
# echo 'nameserver 8.8.8.8' > /etc/resolv.conf
```

Add the package source

- Add the Grizzly repository and upgrade the system:

```
cat > /etc/apt/sources.list.d/grizzly.list << _GEEK_
deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-updates/grizzly main
deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-proposed/grizzly main
_GEEK_
apt-get update
apt-get upgrade
apt-get install ubuntu-cloud-keyring
```

- Configure NTP to use the control node as its time source, and enable IP forwarding:

```
# apt-get install ntp
# sed -i 's/server ntp.ubuntu.com/server 172.16.0.51/g' /etc/ntp.conf
# service ntp restart
# vim /etc/sysctl.conf
net.ipv4.ip_forward=1
# sysctl -p
```

OpenVSwitch

- Install Open vSwitch:

apt-get install openvswitch-switch openvswitch-brcompat

- Enable ovs-brcompatd at startup:

sed -i 's/# BRCOMPAT=no/BRCOMPAT=yes/g' /etc/default/openvswitch-switch

- Start openvswitch-switch:

```
/etc/init.d/openvswitch-switch restart
 * ovs-brcompatd is not running          # brcompatd did not start; restart again
 * ovs-vswitchd is not running
 * ovsdb-server is not running
 * Inserting openvswitch module
 * /etc/openvswitch/conf.db does not exist
 * Creating empty database /etc/openvswitch/conf.db
 * Starting ovsdb-server
 * Configuring Open vSwitch system IDs
 * Starting ovs-vswitchd
 * Enabling gre with iptables
```

- Restart again until ovs-brcompatd, ovs-vswitchd and ovsdb-server are all running:

```
# /etc/init.d/openvswitch-switch restart
# lsmod | grep brcompat
brcompat               13512  0
openvswitch            84038  7 brcompat
```

- If brcompat still does not load, run:

/etc/init.d/openvswitch-switch force-reload-kmod

- Create the bridges:

```
ovs-vsctl add-br br-int
ovs-vsctl add-br br-ex
ovs-vsctl add-port br-ex eth2
```

- After these steps eth2 stops working on its own, because it is now a port of br-ex. Move its address to br-ex and update the interface configuration file:

```
# ifconfig eth2 0
# ifconfig br-ex 192.168.8.52/24
# route add default gw 192.168.8.1 dev br-ex
# echo 'nameserver 8.8.8.8' > /etc/resolv.conf
# vim /etc/network/interfaces
auto eth0
iface eth0 inet static
    address 172.16.0.52
    netmask 255.255.0.0

auto eth1
iface eth1 inet static
    address 10.10.10.52
    netmask 255.255.255.0

auto eth2
iface eth2 inet manual
    up ifconfig $IFACE 0.0.0.0 up
    down ifconfig $IFACE down

auto br-ex
iface br-ex inet static
    address 192.168.8.52
    netmask 255.255.255.0
    gateway 192.168.8.1
    dns-nameservers 8.8.8.8
```

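- Restarting networking may report:

```
/etc/init.d/networking restart
RTNETLINK answers: File exists
Failed to bring up br-ex.
```

br-ex may get its IP address but no gateway or DNS server. Either set them by hand or reboot the machine, after which everything works normally.

Document update: after a system reboot, eth2 on the network node was not brought up. Add the command to /etc/rc.local before its final `exit 0` line, because anything after that line never runs:

sed -i '/^exit 0/i ifconfig eth2 up' /etc/rc.local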

- Inspect the bridges:

```
ovs-vsctl list-br
ovs-vsctl show
```

Quantum

- Install the Quantum openvswitch agent, L3 agent and DHCP agent:

apt-get install quantum-plugin-openvswitch-agent quantum-dhcp-agent quantum-l3-agent

- Edit /etc/quantum/quantum.conf:

```
[DEFAULT]
debug = True
verbose = True
state_path = /var/lib/quantum
lock_path = $state_path/lock
bind_host = 0.0.0.0
bind_port = 9696
core_plugin = quantum.plugins.openvswitch.ovs_quantum_plugin.OVSQuantumPluginV2
api_paste_config = /etc/quantum/api-paste.ini
control_exchange = quantum
rabbit_host = 172.16.0.51
rabbit_password = guest
rabbit_port = 5672
rabbit_userid = guest
notification_driver = quantum.openstack.common.notifier.rpc_notifier
default_notification_level = INFO
notification_topics = notifications

[QUOTAS]

[DEFAULT_SERVICETYPE]

[AGENT]
root_helper = sudo quantum-rootwrap /etc/quantum/rootwrap.conf

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = quantum
admin_password = password
signing_dir = /var/lib/quantum/keystone-signing
```

- Edit the OVS plugin configuration file /etc/quantum/plugins/openvswitch/ovs_quantum_plugin.ini:

```
[DATABASE]
sql_connection = mysql://quantum:quantum@172.16.0.51/quantum
reconnect_interval = 2

[OVS]
enable_tunneling = True
tenant_network_type = gre
tunnel_id_ranges = 1:1000
local_ip = 10.10.10.52
integration_bridge = br-int
tunnel_bridge = br-tun

[AGENT]
polling_interval = 2

[SECURITYGROUP]
```
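The OVS plugin file is identical on the network and compute nodes except for `local_ip`. That value must be the node's own address on the data network: 10.10.10.52 here, 10.10.10.53 on the compute node. The sketch below is a hypothetical generator for the `[OVS]` section that takes that address as its only input.

```shell
#!/bin/sh
# Sketch: print the [OVS] section of ovs_quantum_plugin.ini for one node.
# LOCAL_IP is this node's address on the 10.10.10.0/24 data network.
ovs_section() {
    local_ip=$1
    if [ -z "$local_ip" ]; then
        echo "usage: ovs_section LOCAL_IP" >&2
        return 1
    fi
    cat <<EOF
[OVS]
enable_tunneling = True
tenant_network_type = gre
tunnel_id_ranges = 1:1000
local_ip = $local_ip
integration_bridge = br-int
tunnel_bridge = br-tun
EOF
}
```

For example, `ovs_section 10.10.10.52` on the network node. Paste the output over the `[OVS]` section of the file.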

- Edit /etc/quantum/l3_agent.ini:

```
[DEFAULT]
debug = True
verbose = True
use_namespaces = True
external_network_bridge = br-ex
signing_dir = /var/cache/quantum
admin_tenant_name = service
admin_user = quantum
admin_password = password
auth_url = http://172.16.0.51:35357/v2.0
l3_agent_manager = quantum.agent.l3_agent.L3NATAgentWithStateReport
root_helper = sudo quantum-rootwrap /etc/quantum/rootwrap.conf
interface_driver = quantum.agent.linux.interface.OVSInterfaceDriver
```

- Edit /etc/quantum/dhcp_agent.ini:

```
[DEFAULT]
debug = True
verbose = True
use_namespaces = True
signing_dir = /var/cache/quantum
admin_tenant_name = service
admin_user = quantum
admin_password = password
auth_url = http://172.16.0.51:35357/v2.0
dhcp_agent_manager = quantum.agent.dhcp_agent.DhcpAgentWithStateReport
root_helper = sudo quantum-rootwrap /etc/quantum/rootwrap.conf
state_path = /var/lib/quantum
interface_driver = quantum.agent.linux.interface.OVSInterfaceDriver
dhcp_driver = quantum.agent.linux.dhcp.Dnsmasq
```

- Edit /etc/quantum/metadata_agent.ini:

```
[DEFAULT]
debug = True
auth_url = http://172.16.0.51:35357/v2.0
auth_region = RegionOne
admin_tenant_name = service
admin_user = quantum
admin_password = password
state_path = /var/lib/quantum
nova_metadata_ip = 172.16.0.51
nova_metadata_port = 8775
```

- Restart all quantum services:

```
service quantum-plugin-openvswitch-agent restart
service quantum-dhcp-agent restart
service quantum-l3-agent restart
service quantum-metadata-agent restart
```

Compute node

Network settings

```
cat /etc/network/interfaces
auto eth0
iface eth0 inet static
    address 172.16.0.53
    netmask 255.255.0.0

auto eth1
iface eth1 inet static
    address 10.10.10.53
    netmask 255.255.255.0
```

- Since there is no internal apt mirror, temporarily add a virtual interface so that apt-get can reach the Internet:

```
ifconfig eth0:0 192.168.8.53/24 up
route add default gw 192.168.8.1 dev eth0:0
echo 'nameserver 8.8.8.8' >> /etc/resolv.conf
```

Add the package source

- Add the Grizzly repository and upgrade the system:

```
echo 'deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-updates/grizzly main' > /etc/apt/sources.list.d/grizzly.list
apt-get update
apt-get upgrade
apt-get install ubuntu-cloud-keyring
```

- Configure NTP to use the control node as its time source, and enable IP forwarding:

```
# apt-get install ntp
# sed -i 's/server ntp.ubuntu.com/server 172.16.0.51/g' /etc/ntp.conf
# service ntp restart
# vim /etc/sysctl.conf
net.ipv4.ip_forward=1
# sysctl -p
```

OpenVSwitch

- Install Open vSwitch:

apt-get install openvswitch-switch openvswitch-brcompat

- Enable ovs-brcompatd at startup and load the brcompat module at boot:

```
sed -i 's/# BRCOMPAT=no/BRCOMPAT=yes/g' /etc/default/openvswitch-switch
echo 'brcompat' >> /etc/modules
```
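Appending with `>>` adds a duplicate line each time the step is re-run. The sketch below is a hypothetical idempotent append. It assumes the target file ends with a newline, as /etc/modules normally does.

```shell
#!/bin/sh
# Sketch: append LINE to FILE only if an identical line is not already there.
append_once() {
    line=$1
    file=$2
    grep -qxF "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}
```

For example, `append_once brcompat /etc/modules`.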

- Start openvswitch-switch:

```
/etc/init.d/openvswitch-switch restart
 * ovs-brcompatd is not running          # brcompatd did not start; restart again
 * ovs-vswitchd is not running
 * ovsdb-server is not running
 * Inserting openvswitch module
 * /etc/openvswitch/conf.db does not exist
 * Creating empty database /etc/openvswitch/conf.db
 * Starting ovsdb-server
 * Configuring Open vSwitch system IDs
 * Starting ovs-vswitchd
 * Enabling gre with iptables
```

- Restart again until ovs-brcompatd, ovs-vswitchd and ovsdb-server are all running:

```
# /etc/init.d/openvswitch-switch restart
# lsmod | grep brcompat
brcompat               13512  0
openvswitch            84038  7 brcompat
```

- If brcompat still does not load, run:

/etc/init.d/openvswitch-switch force-reload-kmod

- Create the br-int bridge:

ovs-vsctl add-br br-int

Quantum

- Install the Quantum openvswitch agent:

apt-get install quantum-plugin-openvswitch-agent

- Edit the OVS plugin configuration file /etc/quantum/plugins/openvswitch/ovs_quantum_plugin.ini:

```
[DATABASE]
sql_connection = mysql://quantum:quantum@172.16.0.51/quantum
reconnect_interval = 2

[OVS]
enable_tunneling = True
tenant_network_type = gre
tunnel_id_ranges = 1:1000
local_ip = 10.10.10.53
integration_bridge = br-int
tunnel_bridge = br-tun

[AGENT]
polling_interval = 2

[SECURITYGROUP]
```

- Edit /etc/quantum/quantum.conf:

```
[DEFAULT]
debug = True
verbose = True
state_path = /var/lib/quantum
lock_path = $state_path/lock
bind_host = 0.0.0.0
bind_port = 9696
core_plugin = quantum.plugins.openvswitch.ovs_quantum_plugin.OVSQuantumPluginV2
api_paste_config = /etc/quantum/api-paste.ini
control_exchange = quantum
rabbit_host = 172.16.0.51
rabbit_password = guest
rabbit_port = 5672
rabbit_userid = guest
notification_driver = quantum.openstack.common.notifier.rpc_notifier
default_notification_level = INFO
notification_topics = notifications

[QUOTAS]

[DEFAULT_SERVICETYPE]

[AGENT]
root_helper = sudo quantum-rootwrap /etc/quantum/rootwrap.conf

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = quantum
admin_password = password
signing_dir = /var/lib/quantum/keystone-signing
```

- Start the service:

service quantum-plugin-openvswitch-agent restart

Nova

- Install nova-compute:

apt-get install nova-compute

- Edit the authtoken section in /etc/nova/api-paste.ini:

```
[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = nova
admin_password = password
signing_dir = /tmp/keystone-signing-nova
# Workaround for https://bugs.launchpad.net/nova/+bug/1154809
auth_version = v2.0
```

- Edit /etc/nova/nova.conf along these lines:

```
[DEFAULT]
# LOGS/STATE
debug = False
verbose = True
logdir = /var/log/nova
state_path = /var/lib/nova
lock_path = /var/lock/nova
rootwrap_config = /etc/nova/rootwrap.conf
dhcpbridge = /usr/bin/nova-dhcpbridge

# SCHEDULER
compute_scheduler_driver = nova.scheduler.filter_scheduler.FilterScheduler

## VOLUMES
volume_api_class = nova.volume.cinder.API
osapi_volume_listen_port = 5900

# DATABASE
sql_connection = mysql://nova:nova@172.16.0.51/nova

# COMPUTE
libvirt_type = kvm
compute_driver = libvirt.LibvirtDriver
instance_name_template = instance-%08x
api_paste_config = /etc/nova/api-paste.ini

# COMPUTE/APIS: if you have separate configs for separate services
# this flag is required for both nova-api and nova-compute
allow_resize_to_same_host = True

# APIS
osapi_compute_extension = nova.api.openstack.compute.contrib.standard_extensions
ec2_dmz_host = 172.16.0.51
s3_host = 172.16.0.51
metadata_host = 172.16.0.51
metadata_listen = 0.0.0.0

# RABBITMQ
rabbit_host = 172.16.0.51
rabbit_password = guest

# GLANCE
image_service = nova.image.glance.GlanceImageService
glance_api_servers = 172.16.0.51:9292

# NETWORK
network_api_class = nova.network.quantumv2.api.API
quantum_url = http://172.16.0.51:9696
quantum_auth_strategy = keystone
quantum_admin_tenant_name = service
quantum_admin_username = quantum
quantum_admin_password = password
quantum_admin_auth_url = http://172.16.0.51:35357/v2.0
libvirt_vif_driver = nova.virt.libvirt.vif.LibvirtHybridOVSBridgeDriver
linuxnet_interface_driver = nova.network.linux_net.LinuxOVSInterfaceDriver
firewall_driver = nova.virt.libvirt.firewall.IptablesFirewallDriver

# NOVNC CONSOLE
novncproxy_base_url = http://192.168.8.51:6080/vnc_auto.html
# Change vncserver_proxyclient_address and vncserver_listen to match each compute host
vncserver_proxyclient_address = 172.16.0.53
vncserver_listen = 0.0.0.0

# AUTHENTICATION
auth_strategy = keystone

[keystone_authtoken]
auth_host = 172.16.0.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = nova
admin_password = password
signing_dir = /tmp/keystone-signing-nova
```

- Start the nova-compute service:

service nova-compute restart

- Check the nova services for smiley faces:

The compute node has now joined:

```
nova-manage service list
Binary           Host      Zone      Status   State Updated_At
nova-consoleauth control   internal  enabled  :-)   2013-03-31 11:38:32
nova-cert        control   internal  enabled  :-)   2013-03-31 11:38:31
nova-scheduler   control   internal  enabled  :-)   2013-03-31 11:38:31
nova-conductor   control   internal  enabled  :-)   2013-03-31 11:38:27
nova-compute     compute1  nova      enabled  :-)   2013-03-31 11:38:26
```

Creating VMs

Create the quantum networks and the virtual machines.