Software environment

| Software | Version |
|---|---|
| Operating system | CentOS 7.4 |
| Docker | 18-ce |
| Kubernetes | 1.12 |

Server roles

| Role | IP | Components |
|---|---|---|
| k8s-master | 192.168.0.205 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| k8s-node1 | 192.168.0.206 | kubelet, kube-proxy, docker, flannel, etcd |
| k8s-node2 | 192.168.0.207 | kubelet, kube-proxy, docker, flannel, etcd |

Architecture diagram

Install ansible on the master to manage the cluster

    yum install ansible -y

    # Configure the ansible inventory
    cat > /etc/ansible/hosts <<EOF
    [myhost]
    192.168.0.205
    [node]
    192.168.0.206
    192.168.0.207
    EOF

    # Generate a passwordless SSH key pair
    cd ~
    ssh-keygen -t rsa

    # Copy the public key to each host
    ssh-copy-id -i ~/.ssh/id_rsa.pub root@192.168.0.205
    ssh-copy-id -i ~/.ssh/id_rsa.pub root@192.168.0.206
    ssh-copy-id -i ~/.ssh/id_rsa.pub root@192.168.0.207

    # Test ansible
    ansible all -m ping

Open the firewall

    ansible all -m shell -a 'firewall-cmd --permanent --add-rich-rule="rule family="ipv4" source address="192.168.0.0/16" accept"'
    ansible all -m shell -a 'firewall-cmd --reload'

Generate certificates

Run on k8s-master.

cfssl is used to generate the self-signed certificates. Download the cfssl tools first:

    mkdir /iba/tools -p
    cd /iba/tools
    wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
    chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
    mv cfssl_linux-amd64 /usr/local/bin/cfssl
    mv cfssljson_linux-amd64 /usr/local/bin/cfssljson
    mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

Create the following three files

    cat > ca-config.json <<EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "www": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

ca-config.json: can define multiple profiles, each with its own expiry, usages and other parameters; a specific profile is selected later when a certificate is signed.

signing: the certificate can be used to sign other certificates; the generated ca.pem has CA=TRUE.

server auth: a client can use this CA to verify the certificate presented by a server.

client auth: a server can use this CA to verify the certificate presented by a client.

    cat > ca-csr.json <<EOF
    {
      "CN": "etcd CA",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing"
        }
      ]
    }
    EOF

"CN": Common Name. Components extract this field from the certificate and use it as the user name of the request; browsers use it to check whether a website is legitimate.

    cat > server-csr.json <<EOF
    {
      "CN": "etcd",
      "hosts": [
        "192.168.0.205",
        "192.168.0.206",
        "192.168.0.207"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing"
        }
      ]
    }
    EOF

Generate the certificates

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
    # Produces ca.pem, ca-key.pem and ca.csr

ca.pem, ca-key.pem and ca.csr together make up a self-signed CA.

| File | Purpose |
|---|---|
| ca.pem | CA root certificate |
| ca-key.pem | CA private key, used to sign the certificates issued by this CA |
| ca.csr | Certificate signing request, used for cross-signing or re-signing |

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server
    # Produces server.csr, server-key.pem and server.pem

Deploy etcd

    cd /iba/tools
    wget https://github.com/etcd-io/etcd/releases/download/v3.2.12/etcd-v3.2.12-linux-amd64.tar.gz
    mkdir /opt/etcd/{bin,cfg,ssl} -p
    tar zxf etcd-v3.2.12-linux-amd64.tar.gz
    mv etcd-v3.2.12-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

Create the etcd configuration file:

    cat > /opt/etcd/cfg/etcd <<EOF
    #[Member]
    ETCD_NAME="etcd01"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://192.168.0.205:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.0.205:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.0.205:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://192.168.0.205:2379"
    ETCD_INITIAL_CLUSTER="etcd01=https://192.168.0.205:2380,etcd02=https://192.168.0.206:2380,etcd03=https://192.168.0.207:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"
    EOF

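Notes

| Variable | Meaning |
|---|---|
| ETCD_NAME | Node name |
| ETCD_DATA_DIR | Data directory |
| ETCD_LISTEN_PEER_URLS | Listen address for cluster (peer) traffic |
| ETCD_LISTEN_CLIENT_URLS | Listen address for client traffic |
| ETCD_INITIAL_ADVERTISE_PEER_URLS | Peer address advertised to the cluster |
| ETCD_ADVERTISE_CLIENT_URLS | Client address advertised to clients |
| ETCD_INITIAL_CLUSTER | Addresses of all cluster members |
| ETCD_INITIAL_CLUSTER_TOKEN | Cluster token |
| ETCD_INITIAL_CLUSTER_STATE | State when joining: new for a new cluster, existing to join an existing one |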
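The ETCD_INITIAL_CLUSTER value is easy to mistype by hand. As a minimal sketch, it can be assembled from name=IP pairs; the helper name `initial_cluster` is hypothetical, and the peer port 2380 is the one used in this article.

```shell
# Hypothetical helper: build the ETCD_INITIAL_CLUSTER value from "name=ip" pairs.
initial_cluster() {
  out=""
  for pair in "$@"; do
    name=${pair%%=*}   # text before the first '='
    ip=${pair#*=}      # text after the first '='
    # Prepend a comma only when something has already been collected.
    out="${out:+$out,}${name}=https://${ip}:2380"
  done
  printf '%s\n' "$out"
}

initial_cluster etcd01=192.168.0.205 etcd02=192.168.0.206 etcd03=192.168.0.207
```

The printed string can be pasted into ETCD_INITIAL_CLUSTER on every member, since that value is identical across the cluster.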

Manage etcd with systemd:

    cat > /usr/lib/systemd/system/etcd.service <<'EOF'
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=notify
    EnvironmentFile=/opt/etcd/cfg/etcd
    ExecStart=/opt/etcd/bin/etcd \
      --name=${ETCD_NAME} \
      --data-dir=${ETCD_DATA_DIR} \
      --listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \
      --listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
      --advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \
      --initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
      --initial-cluster=${ETCD_INITIAL_CLUSTER} \
      --initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \
      --cert-file=/opt/etcd/ssl/server.pem \
      --key-file=/opt/etcd/ssl/server-key.pem \
      --peer-cert-file=/opt/etcd/ssl/server.pem \
      --peer-key-file=/opt/etcd/ssl/server-key.pem \
      --trusted-ca-file=/opt/etcd/ssl/ca.pem \
      --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

Copy the certificates generated above to the location referenced in the unit file:

    cd /iba/tools
    cp ca*pem server*pem /opt/etcd/ssl/

Copy the binaries and configuration files to the node machines

    ansible node -m shell -a 'mkdir /opt/etcd/{bin,cfg,ssl} -p'

    cd /opt/etcd/bin/
    ansible node -m copy -a 'src=etcd dest=/opt/etcd/bin/'
    ansible node -m copy -a 'src=etcdctl dest=/opt/etcd/bin/'
    ansible node -m shell -a 'chmod +x /opt/etcd/bin/etcd'
    ansible node -m shell -a 'chmod +x /opt/etcd/bin/etcdctl'

    cd /opt/etcd/ssl/
    ansible node -m copy -a 'src=ca-key.pem dest=/opt/etcd/ssl/'
    ansible node -m copy -a 'src=ca.pem dest=/opt/etcd/ssl/'
    ansible node -m copy -a 'src=server-key.pem dest=/opt/etcd/ssl/'
    ansible node -m copy -a 'src=server.pem dest=/opt/etcd/ssl/'
    ansible node -m shell -a 'ls /opt/etcd/ssl/'

    ansible node -m copy -a 'src=/opt/etcd/cfg/etcd dest=/opt/etcd/cfg/'
    ansible node -m copy -a 'src=/usr/lib/systemd/system/etcd.service dest=/usr/lib/systemd/system/'

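On node1 and node2, edit /opt/etcd/cfg/etcd so that the following values match the node:

| Variable | Change |
|---|---|
| ETCD_NAME | Node name (etcd02 on node1, etcd03 on node2) |
| ETCD_LISTEN_PEER_URLS | The node's own IP |
| ETCD_LISTEN_CLIENT_URLS | The node's own IP |
| ETCD_INITIAL_ADVERTISE_PEER_URLS | The node's own IP |
| ETCD_ADVERTISE_CLIENT_URLS | The node's own IP |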
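Instead of editing the copied file by hand on each node, the per-node configuration can be rendered from a template. This is only a sketch: the function name `render_etcd_conf` is hypothetical, and it simply reproduces the configuration file shown earlier with the node name and IP substituted.

```shell
# Hypothetical helper: print an etcd config for one member.
# $1 = member name, $2 = member IP, $3 = ETCD_INITIAL_CLUSTER value
render_etcd_conf() {
  name=$1
  ip=$2
  cluster=$3
  cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${cluster}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

CLUSTER="etcd01=https://192.168.0.205:2380,etcd02=https://192.168.0.206:2380,etcd03=https://192.168.0.207:2380"
# Print the configuration for node1; redirect to /opt/etcd/cfg/etcd on that node to apply it.
render_etcd_conf etcd02 192.168.0.206 "$CLUSTER"
```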

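Start etcd on all three members (the master is also an etcd member, so the all group is used rather than node)

    ansible all -m shell -a 'systemctl daemon-reload'
    ansible all -m shell -a 'systemctl enable etcd'
    ansible all -m shell -a 'systemctl start etcd'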

Check the etcd cluster health

    cd /opt/etcd/ssl
    /opt/etcd/bin/etcdctl \
      --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
      --endpoints="https://192.168.0.205:2379,https://192.168.0.206:2379,https://192.168.0.207:2379" \
      cluster-health
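When this check is scripted, the result has to be read from the command output. The sketch below assumes that etcdctl (v2 API) ends its cluster-health report with the line "cluster is healthy" when every member responds; the sample text is illustrative, not captured from this cluster, and `cluster_is_healthy` is a hypothetical helper name.

```shell
# Hypothetical helper: read cluster-health output on stdin and succeed
# only if the final line reports a healthy cluster.
cluster_is_healthy() {
  tail -n 1 | grep -qx 'cluster is healthy'
}

# Illustrative sample output (assumed format, made-up member IDs).
sample='member 1a2b3c is healthy: got healthy result from https://192.168.0.205:2379
member 4d5e6f is healthy: got healthy result from https://192.168.0.206:2379
cluster is healthy'

if printf '%s\n' "$sample" | cluster_is_healthy; then
  echo "etcd cluster OK"
else
  echo "etcd cluster NOT healthy"
fi
```

In real use, the etcdctl command above would be piped into `cluster_is_healthy` instead of the sample text.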

Install docker on the node machines

See https://www.linuxidc.com/Linux/2019-01/156519.htm

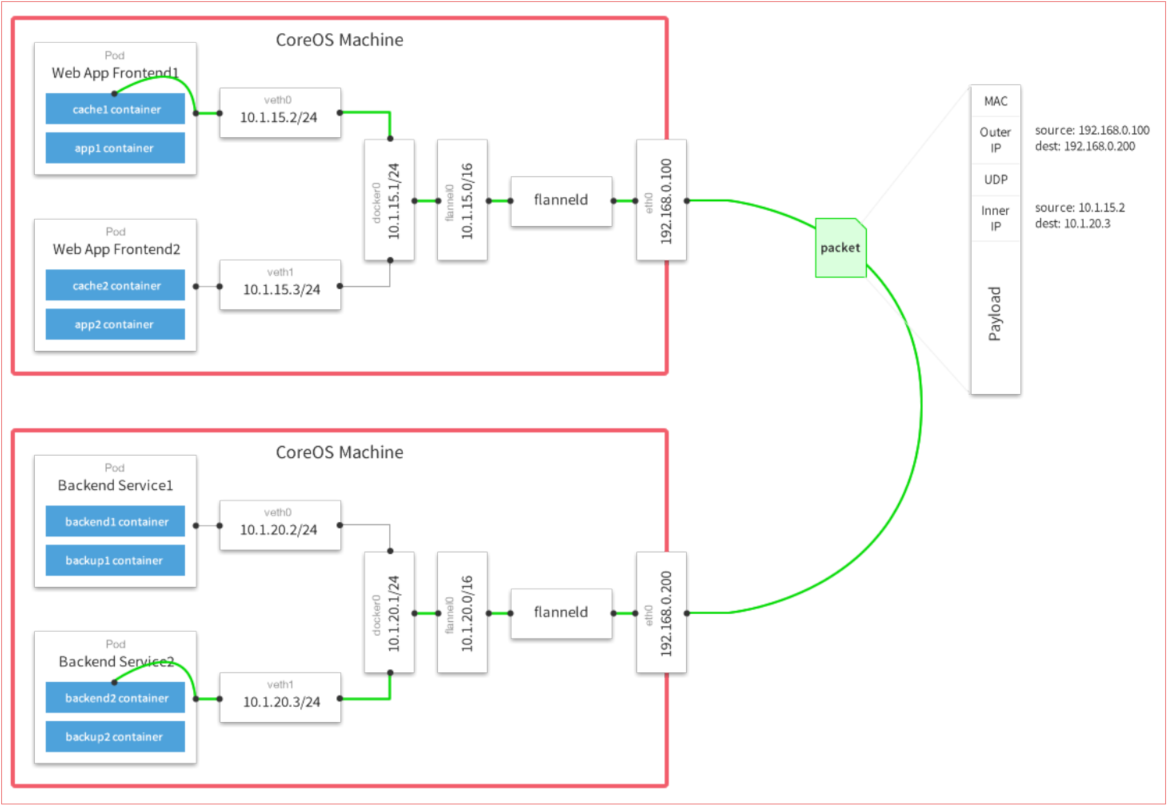

How Flannel works:

Deploy the Flannel network

Run on the master

    # Flannel stores its subnet information in etcd, so make sure etcd is reachable, then write the predefined network range:
    cd /opt/etcd/ssl
    /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.0.205:2379,https://192.168.0.206:2379,https://192.168.0.207:2379" set /coreos.com/network/config '{"Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

Download flannel-v0.10.0-linux-amd64.tar.gz

    ansible node -m file -a 'path=/iba/tools state=directory'
    ansible node -m command -a 'wget -O /iba/tools/flannel-v0.10.0-linux-amd64.tar.gz https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz'
    ansible node -m file -a 'path=/opt/kubernetes/bin state=directory'
    ansible node -m shell -a 'tar zxf /iba/tools/flannel-v0.10.0-linux-amd64.tar.gz -C /opt/kubernetes/bin/'

Manage Flannel with systemd

    mkdir -p /home/config && cd /home/config
    cat > flanneld.service <<'EOF'
    [Unit]
    Description=Flanneld overlay address etcd agent
    After=network-online.target network.target
    Before=docker.service

    [Service]
    Type=notify
    EnvironmentFile=/opt/kubernetes/cfg/flanneld
    ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS
    ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    ansible node -m copy -a 'src=flanneld.service dest=/usr/lib/systemd/system/flanneld.service'

Configure Flannel

    ansible node -m file -a 'path=/opt/kubernetes/cfg state=directory'
    cat > flanneld << EOF
    FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.0.205:2379,https://192.168.0.206:2379,https://192.168.0.207:2379 --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem"
    EOF
    ansible node -m copy -a 'src=flanneld dest=/opt/kubernetes/cfg/flanneld'

Configure Docker to start on the subnet assigned by Flannel

    # Run on each node
    vi /usr/lib/systemd/system/docker.service
    # Below the comment line containing "for containers run by docker",
    # add the EnvironmentFile line and change ExecStart as follows:
    EnvironmentFile=/run/flannel/subnet.env
    ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H unix://

Start flannel and restart docker

    # Run on the master
    ansible node -m shell -a 'systemctl daemon-reload'
    ansible node -m shell -a 'systemctl start flanneld'
    ansible node -m shell -a 'systemctl status flanneld.service'
    ansible node -m shell -a 'systemctl restart docker'

Check that docker was started with the assigned IP range

    ansible node -m shell -a 'ps -ef|grep docker'

Check that docker0 and flannel.1 are in the same network range

    ansible node -m shell -a 'ip addr'
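The first check can be automated by pulling the --bip value out of the dockerd command line and comparing it with the Flannel network written to etcd (172.17.0.0/16). This is a rough sketch: the helper names are hypothetical, the sample command line is illustrative, and the prefix test only works because a /16 ends on an octet boundary.

```shell
# Hypothetical helper: extract the value of --bip from a dockerd command line.
extract_bip() {
  printf '%s\n' "$1" | sed -n 's/.*--bip=\([0-9.]*\/[0-9]*\).*/\1/p'
}

# Hypothetical helper: succeed if the address lies in 172.17.0.0/16.
in_flannel_network() {
  case "$1" in
    172.17.*) return 0 ;;
    *) return 1 ;;
  esac
}

# Illustrative command line, as it might appear in `ps -ef` output.
cmdline='/usr/bin/dockerd --bip=172.17.62.1/24 --ip-masq=false --mtu=1450 -H unix://'
bip=$(extract_bip "$cmdline")

if in_flannel_network "$bip"; then
  echo "docker bridge $bip is inside the Flannel network"
else
  echo "docker bridge '$bip' is NOT inside the Flannel network"
fi
```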

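Deploy components on the master node

Before deploying Kubernetes, make sure etcd, flannel and docker are all working properly; if they are not, fix the problem before continuing.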

Create the CA certificate

    mkdir -p /iba/master-ca
    cd /iba/master-ca

    cat > ca-config.json << EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    cat > ca-csr.json << EOF
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -
    # Produces ca.csr, ca-key.pem and ca.pem

Generate the apiserver certificate:

    cat > server-csr.json << EOF
    {
      "CN": "kubernetes",
      "hosts": [
        "10.0.0.1",
        "127.0.0.1",
        "192.168.0.205",
        "kubernetes",
        "kubernetes.default",
        "kubernetes.default.svc",
        "kubernetes.default.svc.cluster",
        "kubernetes.default.svc.cluster.local"
      ],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server
    # Produces server.pem, server-key.pem and server.csr

Generate the kube-proxy certificate:

    cat > kube-proxy-csr.json << EOF
    {
      "CN": "system:kube-proxy",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy
    # Produces kube-proxy.pem, kube-proxy-key.pem and kube-proxy.csr

Deploy the apiserver component

    mkdir /opt/kubernetes/{bin,cfg,ssl} -p
    cd /iba/tools
    wget https://dl.k8s.io/v1.12.4/kubernetes-server-linux-amd64.tar.gz
    tar zxvf kubernetes-server-linux-amd64.tar.gz
    cd kubernetes/server/bin/
    cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin/

    # Create the token file
    cd /opt/kubernetes/cfg/
    cat > token.csv << EOF
    674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
    EOF
    # token.csv columns: 1) random string, which you can generate yourself; 2) user name; 3) UID; 4) user group
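Because the first column is just a random string, a fresh token can be generated rather than reusing the one printed here. A minimal sketch, assuming /dev/urandom is available; `gen_token` and `token_csv_line` are hypothetical helper names, and the remaining columns are copied from the token.csv line above.

```shell
# Hypothetical helper: print 16 random bytes as 32 lowercase hex characters.
gen_token() {
  head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Hypothetical helper: print a token.csv line for the kubelet-bootstrap user.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

TOKEN=$(gen_token)
token_csv_line "$TOKEN"
```

If a new token is generated this way, the same value has to be used later as BOOTSTRAP_TOKEN when the bootstrap kubeconfig is created.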

Create the apiserver configuration file

    cat > /opt/kubernetes/cfg/kube-apiserver << EOF
    KUBE_APISERVER_OPTS="--logtostderr=true --v=4 \
    --etcd-servers=https://192.168.0.205:2379,https://192.168.0.206:2379,https://192.168.0.207:2379 \
    --bind-address=192.168.0.205 \
    --secure-port=6443 \
    --advertise-address=192.168.0.205 \
    --allow-privileged=true \
    --service-cluster-ip-range=10.0.0.0/24 \
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \
    --authorization-mode=RBAC,Node \
    --enable-bootstrap-token-auth \
    --token-auth-file=/opt/kubernetes/cfg/token.csv \
    --service-node-port-range=30000-50000 \
    --tls-cert-file=/opt/kubernetes/ssl/server.pem \
    --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
    --client-ca-file=/opt/kubernetes/ssl/ca.pem \
    --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
    --etcd-cafile=/opt/etcd/ssl/ca.pem \
    --etcd-certfile=/opt/etcd/ssl/server.pem \
    --etcd-keyfile=/opt/etcd/ssl/server-key.pem"
    EOF

Parameter notes:

| Parameter | Meaning |
|---|---|
| --logtostderr | Write logs to standard error |
| --v | Log verbosity level |
| --etcd-servers | etcd cluster addresses |
| --bind-address | Listen address |
| --secure-port | HTTPS secure port |
| --advertise-address | Address advertised to the cluster |
| --allow-privileged | Allow privileged containers |
| --service-cluster-ip-range | Service virtual IP range |
| --enable-admission-plugins | Admission control plugins |
| --authorization-mode | Authorization modes; enables RBAC and Node authorization |
| --enable-bootstrap-token-auth | Enables TLS bootstrapping, covered later |
| --token-auth-file | Token file |
| --service-node-port-range | Default port range allocated to NodePort Services |
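The Service range (10.0.0.0/24), the Flannel network (172.17.0.0/16) and the host network (192.168.0.0/16) used in this article should not overlap. The sketch below is one way to check that with plain shell arithmetic; the function names are hypothetical, and it handles IPv4 only.

```shell
# Hypothetical helper: convert a dotted IPv4 address to a 32-bit integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split the address into four octets
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Hypothetical helper: print "first last" (as integers) for a CIDR block.
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  first=$(( base / size * size ))   # round down to the block boundary
  echo "$first $(( first + size - 1 ))"
}

# Hypothetical helper: succeed if the two CIDR blocks share any address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  # $1..$2 is the first range, $3..$4 the second.
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

for pair in "10.0.0.0/24 172.17.0.0/16" "10.0.0.0/24 192.168.0.0/16" "172.17.0.0/16 192.168.0.0/16"; do
  set -- $pair
  if cidrs_overlap "$1" "$2"; then
    echo "$1 overlaps $2"
  else
    echo "$1 and $2 are disjoint"
  fi
done
```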

Manage apiserver with systemd

    cat > /usr/lib/systemd/system/kube-apiserver.service <<'EOF'
    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
    ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    # Copy the certificates to the expected location
    cd /iba/master-ca/
    cp server.pem server-key.pem ca.pem ca-key.pem /opt/kubernetes/ssl/

    systemctl daemon-reload
    systemctl enable kube-apiserver
    systemctl start kube-apiserver
    systemctl status kube-apiserver

Deploy the scheduler component

    # Create the scheduler configuration file
    cat > /opt/kubernetes/cfg/kube-scheduler << EOF
    KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect"
    EOF

    # Manage the scheduler with systemd
    cat > /usr/lib/systemd/system/kube-scheduler.service <<'EOF'
    [Unit]
    Description=Kubernetes Scheduler
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
    ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    # Start kube-scheduler
    systemctl daemon-reload
    systemctl enable kube-scheduler
    systemctl start kube-scheduler
    systemctl status kube-scheduler

Deploy the controller-manager component

    # Create the controller-manager configuration file
    cat > /opt/kubernetes/cfg/kube-controller-manager << EOF
    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --root-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem"
    EOF

    # Manage the controller-manager with systemd
    cat > /usr/lib/systemd/system/kube-controller-manager.service <<'EOF'
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
    ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    # Start kube-controller-manager
    systemctl daemon-reload
    systemctl enable kube-controller-manager
    systemctl start kube-controller-manager
    systemctl status kube-controller-manager

Check the status of the cluster components

    /opt/kubernetes/bin/kubectl get cs

On the master, add the Kubernetes binaries to PATH

    vi /etc/profile
    # Add the following line to /etc/profile:
    export PATH=/opt/kubernetes/bin:$PATH
    # Then reload the profile:
    source /etc/profile
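The manual edit can be replaced by an append that is safe to run more than once. A small sketch, with `ensure_line` as a hypothetical helper; the demonstration writes to a temporary file rather than /etc/profile.

```shell
# Hypothetical helper: append a line to a file only if that exact line is not already present.
ensure_line() {
  file=$1
  line=$2
  # -q quiet, -x match the whole line, -F treat the pattern as a fixed string
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Demonstration on a temporary file; on the master the target would be /etc/profile.
demo=$(mktemp)
ensure_line "$demo" 'export PATH=/opt/kubernetes/bin:$PATH'
ensure_line "$demo" 'export PATH=/opt/kubernetes/bin:$PATH'   # second call changes nothing
cat "$demo"
rm -f "$demo"
```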

Bind the kubelet-bootstrap user to the system cluster role

    cd /opt/kubernetes/cfg
    kubectl create clusterrolebinding kubelet-bootstrap \
      --clusterrole=system:node-bootstrapper \
      --user=kubelet-bootstrap

Create the kubeconfig file

    # Create the kubelet bootstrapping kubeconfig
    BOOTSTRAP_TOKEN=674c457d4dcf2eefe4920d7dbb6b0ddc
    KUBE_APISERVER="https://192.168.0.205:6443"

    # Set the cluster parameters
    kubectl config set-cluster kubernetes \
      --certificate-authority=/opt/kubernetes/ssl/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=bootstrap.kubeconfig

    # Set the client credentials
    kubectl config set-credentials kubelet-bootstrap \
      --token=${BOOTSTRAP_TOKEN} \
      --kubeconfig=bootstrap.kubeconfig

    # Set the context parameters
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kubelet-bootstrap \
      --kubeconfig=bootstrap.kubeconfig

    # Use this context by default
    kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

Create the kube-proxy kubeconfig file

    cp /iba/master-ca/kube-proxy.pem /opt/kubernetes/ssl/
    cp /iba/master-ca/kube-proxy-key.pem /opt/kubernetes/ssl/

    kubectl config set-cluster kubernetes \
      --certificate-authority=/opt/kubernetes/ssl/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-credentials kube-proxy \
      --client-certificate=/opt/kubernetes/ssl/kube-proxy.pem \
      --client-key=/opt/kubernetes/ssl/kube-proxy-key.pem \
      --embed-certs=true \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kube-proxy \
      --kubeconfig=kube-proxy.kubeconfig

    kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

    # Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to /opt/kubernetes/cfg on the nodes
    ansible node -m copy -a 'src=bootstrap.kubeconfig dest=/opt/kubernetes/cfg'
    ansible node -m copy -a 'src=kube-proxy.kubeconfig dest=/opt/kubernetes/cfg'

Deploy the kubelet component

    cd /iba/tools/kubernetes/server/bin
    ansible node -m copy -a 'src=kubelet dest=/opt/kubernetes/bin'
    ansible node -m copy -a 'src=kube-proxy dest=/opt/kubernetes/bin'
    ansible node -m shell -a 'chmod +x /opt/kubernetes/bin/kubelet'
    ansible node -m shell -a 'chmod +x /opt/kubernetes/bin/kube-proxy'

Run on node1

    # Create the kubelet configuration file
    cat > /opt/kubernetes/cfg/kubelet << EOF
    KUBELET_OPTS="--logtostderr=true --v=4 \
    --hostname-override=192.168.0.206 \
    --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \
    --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \
    --config=/opt/kubernetes/cfg/kubelet.config \
    --cert-dir=/opt/kubernetes/ssl \
    --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
    EOF

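Parameter notes:

| Parameter | Meaning |
|---|---|
| --hostname-override | Host name shown in the cluster |
| --kubeconfig | Location of the kubeconfig file; it is generated automatically |
| --bootstrap-kubeconfig | The bootstrap.kubeconfig file generated earlier |
| --cert-dir | Directory where issued certificates are stored |
| --pod-infra-container-image | Image that manages the Pod network |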

    # The kubelet.config file is as follows
    cat > /opt/kubernetes/cfg/kubelet.config << EOF
    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: 192.168.0.206
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDNS: ["10.0.0.2"]
    clusterDomain: cluster.local.
    failSwapOn: false
    authentication:
      anonymous:
        enabled: true
    EOF

Manage the kubelet with systemd

    cat > /usr/lib/systemd/system/kubelet.service <<'EOF'
    [Unit]
    Description=Kubernetes Kubelet
    After=docker.service
    Requires=docker.service

    [Service]
    EnvironmentFile=/opt/kubernetes/cfg/kubelet
    ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS
    Restart=on-failure
    KillMode=process

    [Install]
    WantedBy=multi-user.target
    EOF

Start the kubelet

    chmod +x /opt/kubernetes/bin/kubelet
    systemctl daemon-reload
    systemctl enable kubelet
    systemctl start kubelet
    systemctl status kubelet

    # Send the configuration files to node2
    scp /opt/kubernetes/cfg/kubelet root@192.168.0.207:/opt/kubernetes/cfg/
    scp /opt/kubernetes/cfg/kubelet.config root@192.168.0.207:/opt/kubernetes/cfg/
    scp /usr/lib/systemd/system/kubelet.service root@192.168.0.207:/usr/lib/systemd/system/

    # On node2, change the IP addresses to 192.168.0.207
    vi /opt/kubernetes/cfg/kubelet
    vi /opt/kubernetes/cfg/kubelet.config
    chmod +x /opt/kubernetes/bin/kubelet
    systemctl daemon-reload
    systemctl enable kubelet
    systemctl start kubelet
    systemctl status kubelet

On the master, approve the nodes joining the cluster

    cd /opt/kubernetes/bin
    kubectl get csr
    # Replace XXXXX with a request name listed by the previous command
    kubectl certificate approve XXXXX
    kubectl get node
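With several nodes, copying each request name by hand gets tedious. The sketch below filters the pending request names out of the `kubectl get csr` table; `pending_csrs` is a hypothetical helper, and the sample table (column layout and request names) is illustrative rather than captured output.

```shell
# Hypothetical helper: read `kubectl get csr` output on stdin and print
# the names of requests whose last column is "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Illustrative sample table (assumed layout, made-up names).
sample='NAME                AGE   REQUESTOR           CONDITION
node-csr-EXAMPLE1   2m    kubelet-bootstrap   Pending
node-csr-EXAMPLE2   9m    kubelet-bootstrap   Approved,Issued'

printf '%s\n' "$sample" | pending_csrs

# On the master this could be combined with the approve command, for example:
#   for csr in $(kubectl get csr | pending_csrs); do kubectl certificate approve "$csr"; done
```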

Deploy the kube-proxy component

    # Run on node1
    # Create the kube-proxy configuration file
    cat > /opt/kubernetes/cfg/kube-proxy << EOF
    KUBE_PROXY_OPTS="--logtostderr=true --v=4 --hostname-override=192.168.0.206 --cluster-cidr=10.0.0.0/24 --proxy-mode=ipvs --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
    EOF

    # Manage kube-proxy with systemd
    cat > /usr/lib/systemd/system/kube-proxy.service <<'EOF'
    [Unit]
    Description=Kubernetes Proxy
    After=network.target

    [Service]
    EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
    ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target
    EOF

    chmod +x /opt/kubernetes/bin/kube-proxy
    systemctl daemon-reload
    systemctl enable kube-proxy
    systemctl start kube-proxy
    systemctl status kube-proxy

    # Send the configuration files to node2
    scp /opt/kubernetes/cfg/kube-proxy root@192.168.0.207:/opt/kubernetes/cfg/
    scp /usr/lib/systemd/system/kube-proxy.service root@192.168.0.207:/usr/lib/systemd/system/

    # On node2, change the IP address to 192.168.0.207
    vi /opt/kubernetes/cfg/kube-proxy
    chmod +x /opt/kubernetes/bin/kube-proxy
    systemctl daemon-reload
    systemctl enable kube-proxy
    systemctl start kube-proxy
    systemctl status kube-proxy

Check the cluster status

    kubectl get node
    kubectl get cs

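Register and log in to the Alibaba Cloud container registry

Google's pause-amd64 image cannot be pulled from within mainland China, so the Alibaba Cloud mirror is used here. This requires an Alibaba Cloud account and password.

    # Run on each node, replacing {account} with your Alibaba Cloud account name
    sudo docker login --username={account} registry.cn-hangzhou.aliyuncs.com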

Run a test workload

    # Run on the master
    kubectl run nginx --image=nginx --replicas=3
    kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

Get the pod and svc information from the nodes

    # Elevate privileges (this grants cluster-admin to anonymous users)
    kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous
    kubectl get pods -o wide
    kubectl get svc

Use the port obtained above to access nginx on any node.
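The NodePort appears in the PORT(S) column of `kubectl get svc` in the form port:nodePort/protocol. A minimal sketch for extracting it, where `node_port_of` is a hypothetical helper and 31234 is a made-up example value:

```shell
# Hypothetical helper: extract the node port from a PORT(S) value such as "88:31234/TCP".
node_port_of() {
  p=${1#*:}                  # drop everything up to and including the first ':'
  printf '%s\n' "${p%%/*}"   # drop the '/' and the protocol after it
}

ports='88:31234/TCP'         # made-up example value
echo "curl http://192.168.0.206:$(node_port_of "$ports")"
```

The echoed curl command targets node1; either node IP should serve the Service, since a NodePort is opened on every node.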