共计 47607 个字符,预计需要花费 120 分钟才能阅读完成。

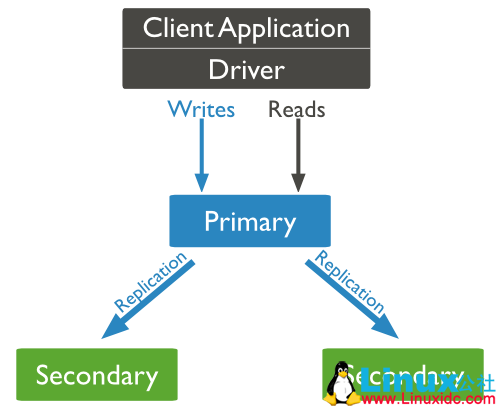

副本集是 MongoDB 提供的一种高可用解决方案。相对于原来的主从复制,副本集能自动感知 primary 节点的下线,并提升其中一个 Secondary 作为 Primary。

整个过程对业务透明,同时也大大降低了运维的成本。

架构图如下:

MongoDB 副本集的角色

1. Primary

默认情况下,读写都是在 Primary 上操作的。

2. Secondary

通过 oplog 来重放 Primary 上的所有操作,拥有 Primary 节点数据的完整拷贝。

默认情况下,不可写,也不可读。

根据不同的需求,Secondary 又可配置为如下形式:

1> Priority 0 Replica Set Members

优先级为 0 的节点,优先级为 0 的成员永远不会被选举为 primary。

在 mongoDB 副本集中,允许给不同的节点设置不同的优先级。

优先级的取值范围为 0 -1000,可设置为浮点数,默认为 1。

拥有最高优先级的成员会优先选举为 primary。

譬如,在副本集中添加了一个优先级为 2 的成员 node3:27020,而其它成员的优先级为 1,只要 node3:27020 拥有最新的数据,那么当前的 primary 就会自动降

级,node3:27020 将会被选举为新的 primary 节点,但如果 node3:27020 中的数据不够新,则当前 primary 节点保持不变,直到 node3:27020 的数据更新到最新。

2> Hidden Replica Set Members- 隐藏节点

隐藏节点的优先级同样为 0,同时对客户端不可见

使用 rs.status() 和 rs.config() 可以看到隐藏节点,但是对于 db.isMaster() 不可见。客户端连接到副本集时,会调用 db.isMaster() 命令来查看可用成员信息。所以,隐藏节

点不会受到客户端的读请求。

隐藏节点常用于执行特定的任务,譬如报表,备份。

3> Delayed Replica Set Members- 延迟节点

延迟节点会比 primary 节点延迟指定的时间(通过 slaveDelay 参数来指定)

延迟节点必须是隐藏节点。

3. Arbiter

仲裁节点,只是用来投票,且投票的权重只能为 1,不复制数据,也不能提升为 primary。

仲裁节点常用于节点数量是偶数的副本集中。

建议:通常将 Arbiter 部署在业务服务器上,切忌将其部署在 Primary 节点或 Secondary 节点服务器上。

注:一个副本集最多有 50 个成员节点,7 个投票节点。

MongoDB 副本集的搭建

创建数据目录

# mkdir -p /data/27017

# mkdir -p /data/27018

# mkdir -p /data/27019

为了便于查看运行过程中的日志信息,为每个实例创建单独的日志文件

# mkdir -p /var/log/mongodb/

启动 mongod 实例

# mongod –replSet myapp –dbpath /data/27017 –port 27017 –logpath /var/log/mongodb/27017.log –fork

# mongod –replSet myapp –dbpath /data/27018 –port 27018 –logpath /var/log/mongodb/27018.log –fork

# mongod –replSet myapp –dbpath /data/27019 –port 27019 –logpath /var/log/mongodb/27019.log –fork

以 27017 端口实例为例,其日志输出信息如下:

# mongo

初始化副本集

| > rs.initiate() | |

| {"info2" : "no configuration specified. Using a default configuration for the set", | |

| "me" : "node3:27017", | |

| "ok" : 1 | |

| } |

可通过 rs.conf() 查看当前副本集的配置信息,

| myapp:PRIMARY> rs.conf() | |

| {"_id" : "myapp", | |

| "version" : 1, | |

| "protocolVersion" : NumberLong(1), | |

| "members" : [ | |

| {"_id" : 0, | |

| "host" : "node3:27017", | |

| "arbiterOnly" : false, | |

| "buildIndexes" : true, | |

| "hidden" : false, | |

| "priority" : 1, | |

| "tags" : { }, | |

| "slaveDelay" : NumberLong(0), | |

| "votes" : 1 | |

| } | |

| ], | |

| "settings" : {"chainingAllowed" : true, | |

| "heartbeatIntervalMillis" : 2000, | |

| "heartbeatTimeoutSecs" : 10, | |

| "electionTimeoutMillis" : 10000, | |

| "catchUpTimeoutMillis" : 2000, | |

| "getLastErrorModes" : { }, | |

| "getLastErrorDefaults" : {"w" : 1, | |

| "wtimeout" : 0 | |

| }, | |

| "replicaSetId" : ObjectId("59082229517dd35bb9fd0d2a") | |

| } | |

| } |

chainingAllowed:是否允许级联复制

heartbeatIntervalMillis:心跳检测时间,默认是 2s

heartbeatTimeoutSecs:心跳检测失效时间,默认为 10s,即如果在 10s 内没有收到节点的心跳信息,则判断节点不可达(HostUnreachable),对 primary 和 Secondary 均适用。

日志输出信息如下:

# vim /var/log/mongodb/27017.log

添加节点

| myapp:PRIMARY> rs.add("node3:27018") | |

| {"ok" : 1 } |

27017 端口实例的日志信息如下:

27018 端口实例的日志信息如下:

| 2017-05-02T15:54:44.796+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:46984 #2 (1 connection now open) | |

| 2017-05-02T15:54:44.922+0800 I - [conn2] end connection 192.168.244.30:46984 (1 connection now open) | |

| 2017-05-02T15:54:44.965+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:46985 #3 (1 connection now open) | |

| 2017-05-02T15:54:44.978+0800 I NETWORK [conn3] received client metadata from 192.168.244.30:46985 conn3: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T15:54:44.991+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T15:54:45.008+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27017 | |

| 2017-05-02T15:54:47.101+0800 I REPL [replExecDBWorker-2] Starting replication storage threads | |

| 2017-05-02T15:54:47.174+0800 I REPL [replication-0] Starting initial sync (attempt 1 of 10) | |

| 2017-05-02T15:54:47.174+0800 I REPL [ReplicationExecutor] New replica set config in use: {_id: "myapp", version: 2, protocolVersion: 1, members: [{ _id: 0, host: "node3:27017", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 1, host: "node3:27018", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 } ], settings: {chainingAllowed: true, heartbeatIntervalMillis: 2000, heartbeatTimeoutSecs: 10, electionTimeoutMillis: 10000, catchUpTimeoutMillis: 2000, getLastErrorModes: {}, getLastErrorDefaults: { w: 1, wtimeout: 0 }, replicaSetId: ObjectId('59082229517dd35bb9fd0d2a') } } | |

| 2017-05-02T15:54:47.174+0800 I REPL [ReplicationExecutor] This node is node3:27018 in the config | |

| 2017-05-02T15:54:47.174+0800 I REPL [ReplicationExecutor] transition to STARTUP2 | |

| 2017-05-02T15:54:47.175+0800 I REPL [ReplicationExecutor] Member node3:27017 is now in state PRIMARY | |

| 2017-05-02T15:54:47.217+0800 I REPL [replication-0] sync source candidate: node3:27017 | |

| 2017-05-02T15:54:47.217+0800 I STORAGE [replication-0] dropAllDatabasesExceptLocal 1 | |

| 2017-05-02T15:54:47.217+0800 I REPL [replication-0] ****** | |

| 2017-05-02T15:54:47.217+0800 I REPL [replication-0] creating replication oplog of size: 990MB... | |

| 2017-05-02T15:54:47.232+0800 I STORAGE [replication-0] Starting WiredTigerRecordStoreThread local.oplog.rs | |

| 2017-05-02T15:54:47.232+0800 I STORAGE [replication-0] The size storer reports that the oplog contains 0 records totaling to 0 bytes | |

| 2017-05-02T15:54:47.232+0800 I STORAGE [replication-0] Scanning the oplog to determine where to place markers for truncation | |

| 2017-05-02T15:54:47.938+0800 I REPL [replication-0] ****** | |

| 2017-05-02T15:54:47.939+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T15:54:47.941+0800 I ASIO [NetworkInterfaceASIO-RS-0] Successfully connected to node3:27017 | |

| 2017-05-02T15:54:48.010+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T15:54:48.011+0800 I ASIO [NetworkInterfaceASIO-RS-0] Successfully connected to node3:27017 | |

| 2017-05-02T15:54:48.046+0800 I REPL [replication-0] CollectionCloner::start called, on ns:admin.system.version | |

| 2017-05-02T15:54:48.150+0800 I INDEX [InitialSyncInserters-admin.system.version0] build index on: admin.system.version properties: {v: 2, key: {version: 1 }, name: "incompatible_with_version_32", ns: "admin.system.version" } | |

| 2017-05-02T15:54:48.150+0800 I INDEX [InitialSyncInserters-admin.system.version0] building index using bulk method; build may temporarily use up to 500 megabytes of RAM | |

| 2017-05-02T15:54:48.154+0800 I INDEX [InitialSyncInserters-admin.system.version0] build index on: admin.system.version properties: {v: 1, key: {_id: 1 }, name: "_id_", ns: "admin.system.version" } | |

| 2017-05-02T15:54:48.155+0800 I INDEX [InitialSyncInserters-admin.system.version0] building index using bulk method; build may temporarily use up to 500 megabytes of RAM | |

| 2017-05-02T15:54:48.177+0800 I COMMAND [InitialSyncInserters-admin.system.version0] setting featureCompatibilityVersion to 3.4 | |

| 2017-05-02T15:54:48.221+0800 I REPL [replication-1] CollectionCloner::start called, on ns:test.blog | |

| 2017-05-02T15:54:48.264+0800 I INDEX [InitialSyncInserters-test.blog0] build index on: test.blog properties: {v: 2, key: {_id: 1 }, name: "_id_", ns: "test.blog" } | |

| 2017-05-02T15:54:48.264+0800 I INDEX [InitialSyncInserters-test.blog0] building index using bulk method; build may temporarily use up to 500 megabytes of RAM | |

| 2017-05-02T15:54:48.271+0800 I REPL [replication-1] No need to apply operations. (currently at { : Timestamp 1493711685000|1 }) | |

| 2017-05-02T15:54:48.271+0800 I REPL [replication-1] Finished fetching oplog during initial sync: CallbackCanceled: Callback canceled. Last fetched optime and hash: {ts: Timestamp 1493711685000|1, t: 1 }[7804552707376497349] | |

| 2017-05-02T15:54:48.271+0800 I REPL [replication-1] Initial sync attempt finishing up. | |

| 2017-05-02T15:54:48.271+0800 I REPL [replication-1] Initial Sync Attempt Statistics: {failedInitialSyncAttempts: 0, maxFailedInitialSyncAttempts: 10, initialSyncStart: new Date(1493711687173), initialSyncAttempts: [], fetchedMissingDocs: 0, appliedOps: 0, initialSyncOplogStart: Timestamp 1493711685000|1, initialSyncOplogEnd: Timestamp 1493711685000|1, databases: {databasesCloned: 2, admin: {collections: 1, clonedCollections: 1, start: new Date(1493711688037), end: new Date(1493711688220), elapsedMillis: 183, admin.system.version: {documentsToCopy: 1, documentsCopied: 1, indexes: 2, fetchedBatches: 1, start: new Date(1493711688046), end: new Date(1493711688220), elapsedMillis: 174 } }, test: {collections: 1, clonedCollections: 1, start: new Date(1493711688220), end: new Date(1493711688270), elapsedMillis: 50, test.blog: {documentsToCopy: 1, documentsCopied: 1, indexes: 1, fetchedBatches: 1, start: new Date(1493711688221), end: new Date(1493711688270), elapsedMillis: 49 } } } } | |

| 2017-05-02T15:54:48.352+0800 I REPL [replication-1] initial sync done; took 1s. | |

| 2017-05-02T15:54:48.352+0800 I REPL [replication-1] Starting replication fetcher thread | |

| 2017-05-02T15:54:48.352+0800 I REPL [replication-1] Starting replication applier thread | |

| 2017-05-02T15:54:48.352+0800 I REPL [replication-1] Starting replication reporter thread | |

| 2017-05-02T15:54:48.352+0800 I REPL [rsSync] transition to RECOVERING | |

| 2017-05-02T15:54:48.366+0800 I REPL [rsBackgroundSync] could not find member to sync from | |

| 2017-05-02T15:54:48.367+0800 I REPL [rsSync] transition to SECONDARY | |

| 2017-05-02T15:55:03.392+0800 I REPL [rsBackgroundSync] sync source candidate: node3:27017 | |

| 2017-05-02T15:55:03.396+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T15:55:03.404+0800 I ASIO [NetworkInterfaceASIO-RS-0] Successfully connected to node3:27017 |

添加仲裁节点

| myapp:PRIMARY> rs.addArb("node3:27019") | |

| {"ok" : 1 } |

27017 端口实例的日志信息如下:

| 2017-05-02T16:06:59.098+0800 I REPL [conn1] replSetReconfig admin command received from client | |

| 2017-05-02T16:06:59.116+0800 I REPL [conn1] replSetReconfig config object with 3 members parses ok | |

| 2017-05-02T16:06:59.116+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27019 | |

| 2017-05-02T16:06:59.123+0800 I REPL [ReplicationExecutor] New replica set config in use: {_id: "myapp", version: 3, protocolVersion: 1, members: [{ _id: 0, host: "node3:27017", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 1, host: "node3:27018", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 2, host: "node3:27019", arbiterOnly: true, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 } ], settings: {chainingAllowed: true, heartbeatIntervalMillis: 2000, heartbeatTimeoutSecs: 10, electionTimeoutMillis: 10000, catchUpTimeoutMillis: 2000, getLastErrorModes: {}, getLastErrorDefaults: { w: 1, wtimeout: 0 }, replicaSetId: ObjectId('59082229517dd35bb9fd0d2a') } } | |

| 2017-05-02T16:06:59.123+0800 I REPL [ReplicationExecutor] This node is node3:27017 in the config | |

| 2017-05-02T16:06:59.124+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27019 | |

| 2017-05-02T16:06:59.124+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27019 | |

| 2017-05-02T16:06:59.125+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:38300 #9 (6 connections now open) | |

| 2017-05-02T16:06:59.127+0800 I - [conn9] end connection 192.168.244.30:38300 (6 connections now open) | |

| 2017-05-02T16:06:59.131+0800 I REPL [ReplicationExecutor] Member node3:27019 is now in state STARTUP | |

| 2017-05-02T16:06:59.137+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27019 | |

| 2017-05-02T16:06:59.223+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:38304 #10 (6 connections now open) | |

| 2017-05-02T16:06:59.225+0800 I NETWORK [conn10] received client metadata from 192.168.244.30:38304 conn10: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T16:06:59.231+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:38306 #11 (7 connections now open) | |

| 2017-05-02T16:06:59.232+0800 I - [conn11] end connection 192.168.244.30:38306 (7 connections now open) | |

| 2017-05-02T16:07:01.132+0800 I REPL [ReplicationExecutor] Member node3:27019 is now in state ARBITER |

27019 端口实例的日志信息如下:

| 2017-05-02T16:06:59.115+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:33003 #1 (1 connection now open) | |

| 2017-05-02T16:06:59.117+0800 I - [conn1] end connection 192.168.244.30:33003 (1 connection now open) | |

| 2017-05-02T16:06:59.117+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:33004 #2 (1 connection now open) | |

| 2017-05-02T16:06:59.122+0800 I NETWORK [conn2] received client metadata from 192.168.244.30:33004 conn2: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T16:06:59.125+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:33005 #3 (2 connections now open) | |

| 2017-05-02T16:06:59.127+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:33007 #4 (3 connections now open) | |

| 2017-05-02T16:06:59.128+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T16:06:59.135+0800 I - [conn4] end connection 192.168.244.30:33007 (3 connections now open) | |

| 2017-05-02T16:06:59.136+0800 I NETWORK [conn3] received client metadata from 192.168.244.30:33005 conn3: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T16:06:59.214+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:33008 #5 (3 connections now open) | |

| 2017-05-02T16:06:59.216+0800 I NETWORK [conn5] received client metadata from 192.168.244.30:33008 conn5: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T16:06:59.219+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27018 | |

| 2017-05-02T16:06:59.227+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27018 | |

| 2017-05-02T16:06:59.227+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27017 | |

| 2017-05-02T16:06:59.295+0800 I REPL [ReplicationExecutor] New replica set config in use: {_id: "myapp", version: 3, protocolVersion: 1, members: [{ _id: 0, host: "node3:27017", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 1, host: "node3:27018", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 2, host: "node3:27019", arbiterOnly: true, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 } ], settings: {chainingAllowed: true, heartbeatIntervalMillis: 2000, heartbeatTimeoutSecs: 10, electionTimeoutMillis: 10000, catchUpTimeoutMillis: 2000, getLastErrorModes: {}, getLastErrorDefaults: { w: 1, wtimeout: 0 }, replicaSetId: ObjectId('59082229517dd35bb9fd0d2a') } } | |

| 2017-05-02T16:06:59.295+0800 I REPL [ReplicationExecutor] This node is node3:27019 in the config | |

| 2017-05-02T16:06:59.295+0800 I REPL [ReplicationExecutor] transition to ARBITER | |

| 2017-05-02T16:06:59.297+0800 I REPL [ReplicationExecutor] Member node3:27017 is now in state PRIMARY | |

| 2017-05-02T16:06:59.297+0800 I REPL [ReplicationExecutor] Member node3:27018 is now in state SECONDARY | |

| 2017-05-02T16:07:59.132+0800 I - [conn2] end connection 192.168.244.30:33004 (3 connections now open) |

检查复制集的状态

| myapp:PRIMARY> rs.status() | |

| {"set" : "myapp", | |

| "date" : ISODate("2017-05-02T08:10:59.174Z"), | |

| "myState" : 1, | |

| "term" : NumberLong(1), | |

| "heartbeatIntervalMillis" : NumberLong(2000), | |

| "optimes" : {"lastCommittedOpTime" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| }, | |

| "appliedOpTime" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| }, | |

| "durableOpTime" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| } | |

| }, | |

| "members" : [ | |

| {"_id" : 0, | |

| "name" : "node3:27017", | |

| "health" : 1, | |

| "state" : 1, | |

| "stateStr" : "PRIMARY", | |

| "uptime" : 7537, | |

| "optime" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| }, | |

| "optimeDate" : ISODate("2017-05-02T08:10:49Z"), | |

| "electionTime" : Timestamp(1493705257, 2), | |

| "electionDate" : ISODate("2017-05-02T06:07:37Z"), | |

| "configVersion" : 3, | |

| "self" : true | |

| }, | |

| {"_id" : 1, | |

| "name" : "node3:27018", | |

| "health" : 1, | |

| "state" : 2, | |

| "stateStr" : "SECONDARY", | |

| "uptime" : 974, | |

| "optime" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| }, | |

| "optimeDurable" : {"ts" : Timestamp(1493712649, 1), | |

| "t" : NumberLong(1) | |

| }, | |

| "optimeDate" : ISODate("2017-05-02T08:10:49Z"), | |

| "optimeDurableDate" : ISODate("2017-05-02T08:10:49Z"), | |

| "lastHeartbeat" : ISODate("2017-05-02T08:10:57.606Z"), | |

| "lastHeartbeatRecv" : ISODate("2017-05-02T08:10:58.224Z"), | |

| "pingMs" : NumberLong(0), | |

| "syncingTo" : "node3:27017", | |

| "configVersion" : 3 | |

| }, | |

| {"_id" : 2, | |

| "name" : "node3:27019", | |

| "health" : 1, | |

| "state" : 7, | |

| "stateStr" : "ARBITER", | |

| "uptime" : 240, | |

| "lastHeartbeat" : ISODate("2017-05-02T08:10:57.607Z"), | |

| "lastHeartbeatRecv" : ISODate("2017-05-02T08:10:54.391Z"), | |

| "pingMs" : NumberLong(0), | |

| "configVersion" : 3 | |

| } | |

| ], | |

| "ok" : 1 | |

| } |

副本集也可通过配置文件的方式进行创建

| > cfg={... "_id":"myapp", | |

| "members":[... {"_id":0,"host":"node3:27017"}, | |

| {"_id":1,"host":"node3:27018"}, | |

| {"_id":2,"host":"node3:27019","arbiterOnly" : true} | |

| ]} | |

| > rs.initiate(cfg) |

验证副本集的可用性

在 primary 中创建一个集合,并插入一个文档进行测试

| # mongo | |

| myapp:PRIMARY> show dbs; | |

| admin 0.000GB | |

| local 0.000GB | |

| myapp:PRIMARY> use test | |

| switched to db test | |

| myapp:PRIMARY> db.blog.insert({"title":"My Blog Post"}) | |

| WriteResult({"nInserted" : 1 }) | |

| myapp:PRIMARY> db.blog.find(); | |

| {"_id" : ObjectId("59082731008c534e0763e90a"), "title" : "My Blog Post" } | |

| myapp:PRIMARY> quit() |

在 secondary 中进行验证

| # mongo --port 27018 | |

| myapp:SECONDARY> use test | |

| switched to db test | |

| myapp:SECONDARY> db.blog.find() | |

| Error: error: {"ok" : 0, | |

| "errmsg" : "not master and slaveOk=false", | |

| "code" : 13435, | |

| "codeName" : "NotMasterNoSlaveOk" | |

| } | |

| myapp:SECONDARY> rs.slaveOk() | |

| myapp:SECONDARY> db.blog.find() | |

| {"_id" : ObjectId("59082731008c534e0763e90a"), "title" : "My Blog Post" } | |

| myapp:SECONDARY> quit() |

因仲裁节点实际上并不存储任何数据,所以无法通过连接仲裁节点查看刚刚插入的文档

| # mongo --port 27019 | |

| myapp:ARBITER> use test | |

| switched to db test | |

| myapp:ARBITER> db.blog.find(); | |

| Error: error: {"ok" : 0, | |

| "errmsg" : "not master and slaveOk=false", | |

| "code" : 13435, | |

| "codeName" : "NotMasterNoSlaveOk" | |

| } | |

| myapp:ARBITER> rs.slaveOk() | |

| myapp:ARBITER> db.blog.find() | |

| Error: error: {"ok" : 0, | |

| "errmsg" : "node is not in primary or recovering state", | |

| "code" : 13436, | |

| "codeName" : "NotMasterOrSecondary" | |

| } | |

| myapp:ARBITER> quit() |

模拟 primary 宕掉后,副本集的自动切换

| # ps -ef |grep mongodb | |

| root 2619 1 1 13:59 ? 00:02:58 mongod --replSet myapp --dbpath /data/27018 --port 27018 --logpath /var/log/mongodb | |

| /27018.log --forkroot 2643 1 1 13:59 ? 00:02:38 mongod --replSet myapp --dbpath /data/27019 --port 27019 --logpath /var/log/mongodb | |

| /27019.log --forkroot 2739 1 1 14:05 ? 00:03:12 mongod --replSet myapp --dbpath /data/27017 --port 27017 --logpath /var/log/mongodb | |

| /27017.log --forkroot 3009 2037 0 16:08 pts/2 00:00:00 vim /var/log/mongodb/27017.log | |

| root 3055 2884 0 16:59 pts/5 00:00:00 tailf /var/log/mongodb/27019.log | |

| root 3071 2209 0 16:59 pts/3 00:00:00 tailf /var/log/mongodb/27018.log | |

| root 3097 1921 0 17:00 pts/0 00:00:00 grep mongodb | |

| # kill -9 2739 |

检查复制集的状态

在这里,连接 27018 端口实例

# mongo –port 27018

| myapp:PRIMARY> db.isMaster() | |

| {"hosts" : ["node3:27017", | |

| "node3:27018" | |

| ], | |

| "arbiters" : ["node3:27019" | |

| ], | |

| "setName" : "myapp", | |

| "setVersion" : 3, | |

| "ismaster" : true, | |

| "secondary" : false, | |

| "primary" : "node3:27018", | |

| "me" : "node3:27018", | |

| "electionId" : ObjectId("7fffffff0000000000000002"), | |

| "lastWrite" : {"opTime" : {"ts" : Timestamp(1493716742, 1), | |

| "t" : NumberLong(2) | |

| }, | |

| "lastWriteDate" : ISODate("2017-05-02T09:19:02Z") | |

| }, | |

| "maxBsonObjectSize" : 16777216, | |

| "maxMessageSizeBytes" : 48000000, | |

| "maxWriteBatchSize" : 1000, | |

| "localTime" : ISODate("2017-05-02T09:19:04.870Z"), | |

| "maxWireVersion" : 5, | |

| "minWireVersion" : 0, | |

| "readOnly" : false, | |

| "ok" : 1 | |

| } |

可见,primary 已经切换到 27018 端口实例上了。

对应的,27018 端口实例的日志输出信息如下:

| 2017-05-02T17:00:51.853+0800 I - [conn3] end connection 192.168.244.30:46985 (2 connections now open) | |

| 2017-05-02T17:00:51.853+0800 I REPL [replication-7] Restarting oplog query due to error: HostUnreachable: End of file. Last fetched optime (with hash): {ts: Timestamp 1493715649000|1, t: 1 }[-5996450771261812604]. Restarts remaining: 3 | |

| 2017-05-02T17:00:51.878+0800 I ASIO [replication-7] dropping unhealthy pooled connection to node3:27017 | |

| 2017-05-02T17:00:51.878+0800 I ASIO [replication-7] after drop, pool was empty, going to spawn some connections | |

| 2017-05-02T17:00:51.879+0800 I REPL [replication-7] Scheduled new oplog query Fetcher source: node3:27017 database: local query: {find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } query metadata: {$replData: 1, $ssm: {$secondaryOk: true } } active: 1 timeout: 10000ms shutting down?: 0 first: 1 firstCommandScheduler: RemoteCommandRetryScheduler request: RemoteCommand 12010 -- target:node3:27017 db:local cmd:{find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } active: 1 callbackHandle.valid: 1 callbackHandle.cancelled: 0 attempt: 1 retryPolicy: RetryPolicyImpl maxAttempts: 1 maxTimeMillis: -1ms | |

| 2017-05-02T17:00:51.879+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.879+0800 I ASIO [NetworkInterfaceASIO-RS-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.880+0800 I REPL [replication-8] Restarting oplog query due to error: HostUnreachable: Connection refused. Last fetched optime (with hash): {ts: Timestamp 1493715649000|1, t: 1 }[-5996450771261812604]. Restarts remaining: 2 | |

| 2017-05-02T17:00:51.880+0800 I REPL [replication-8] Scheduled new oplog query Fetcher source: node3:27017 database: local query: {find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } query metadata: {$replData: 1, $ssm: {$secondaryOk: true } } active: 1 timeout: 10000ms shutting down?: 0 first: 1 firstCommandScheduler: RemoteCommandRetryScheduler request: RemoteCommand 12013 -- target:node3:27017 db:local cmd:{find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } active: 1 callbackHandle.valid: 1 callbackHandle.cancelled: 0 attempt: 1 retryPolicy: RetryPolicyImpl maxAttempts: 1 maxTimeMillis: -1ms | |

| 2017-05-02T17:00:51.880+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.880+0800 I ASIO [NetworkInterfaceASIO-RS-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.880+0800 I REPL [replication-7] Restarting oplog query due to error: HostUnreachable: Connection refused. Last fetched optime (with hash): {ts: Timestamp 1493715649000|1, t: 1 }[-5996450771261812604]. Restarts remaining: 1 | |

| 2017-05-02T17:00:51.880+0800 I REPL [replication-7] Scheduled new oplog query Fetcher source: node3:27017 database: local query: {find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } query metadata: {$replData: 1, $ssm: {$secondaryOk: true } } active: 1 timeout: 10000ms shutting down?: 0 first: 1 firstCommandScheduler: RemoteCommandRetryScheduler request: RemoteCommand 12015 -- target:node3:27017 db:local cmd:{find: "oplog.rs", filter: {ts: { $gte: Timestamp 1493715649000|1 } }, tailable: true, oplogReplay: true, awaitData: true, maxTimeMS: 60000, term: 1 } active: 1 callbackHandle.valid: 1 callbackHandle.cancelled: 0 attempt: 1 retryPolicy: RetryPolicyImpl maxAttempts: 1 maxTimeMillis: -1ms | |

| 2017-05-02T17:00:51.880+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.883+0800 I ASIO [NetworkInterfaceASIO-RS-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.884+0800 I REPL [replication-8] Error returned from oplog query (no more query restarts left): HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.884+0800 W REPL [rsBackgroundSync] Fetcher stopped querying remote oplog with error: HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.884+0800 I REPL [rsBackgroundSync] could not find member to sync from | |

| 2017-05-02T17:00:51.884+0800 I ASIO [ReplicationExecutor] dropping unhealthy pooled connection to node3:27017 | |

| 2017-05-02T17:00:51.884+0800 I ASIO [ReplicationExecutor] after drop, pool was empty, going to spawn some connections | |

| 2017-05-02T17:00:51.884+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.885+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.885+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.885+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.885+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.885+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.885+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:51.885+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:51.886+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:00:54.837+0800 I REPL [SyncSourceFeedback] SyncSourceFeedback error sending update to node3:27017: InvalidSyncSource: Sync source was cleared. Was node3:27017 | |

| 2017-05-02T17:00:56.886+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:56.886+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:56.886+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:00:56.886+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:56.887+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:56.887+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:00:56.887+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:00:56.887+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:00:56.887+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.560+0800 I REPL [ReplicationExecutor] Starting an election, since we've seen no PRIMARY in the past 10000ms | |

| 2017-05-02T17:01:01.605+0800 I REPL [ReplicationExecutor] conducting a dry run election to see if we could be elected | |

| 2017-05-02T17:01:01.616+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.626+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.630+0800 I REPL [ReplicationExecutor] VoteRequester(term 1 dry run) failed to receive response from node3:27017: HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.637+0800 I REPL [ReplicationExecutor] VoteRequester(term 1 dry run) received a yes vote from node3:27019; response message: {term: 1, voteGranted: true, reason: "", ok: 1.0 } | |

| 2017-05-02T17:01:01.638+0800 I REPL [ReplicationExecutor] dry election run succeeded, running for election | |

| 2017-05-02T17:01:01.670+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.670+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.672+0800 I REPL [ReplicationExecutor] VoteRequester(term 2) failed to receive response from node3:27017: HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] VoteRequester(term 2) received a yes vote from node3:27019; response message: {term: 2, voteGranted: true, reason: "", ok: 1.0 } | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] election succeeded, assuming primary role in term 2 | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] transition to PRIMARY | |

| 2017-05-02T17:01:01.691+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.692+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.692+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27019 | |

| 2017-05-02T17:01:01.693+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.693+0800 I REPL [ReplicationExecutor] My optime is most up-to-date, skipping catch-up and completing transition to primary. | |

| 2017-05-02T17:01:01.693+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.693+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.693+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.694+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.694+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.694+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.694+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.694+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.694+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27019 | |

| 2017-05-02T17:01:02.094+0800 I REPL [rsSync] transition to primary complete; database writes are now permitted |

从日志输出中可以看出,

在第一次探测到 primary 不可用时,mongodb 会剔除掉不健康连接(dropping unhealthy pooled connection to node3:27017),然后继续探测,直到到达 10s(heartbeatTimeoutSecs)的限制,此时进行 primary 的自动切换。

| 2017-05-02T17:01:01.560+0800 I REPL [ReplicationExecutor] Starting an election, since we've seen no PRIMARY in the past 10000ms | |

| 2017-05-02T17:01:01.605+0800 I REPL [ReplicationExecutor] conducting a dry run election to see if we could be elected | |

| 2017-05-02T17:01:01.616+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.626+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.630+0800 I REPL [ReplicationExecutor] VoteRequester(term 1 dry run) failed to receive response from node3:27017: HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.637+0800 I REPL [ReplicationExecutor] VoteRequester(term 1 dry run) received a yes vote from node3:27019; response message: {term: 1, voteGranted: true, reason: "", ok: 1.0 } | |

| 2017-05-02T17:01:01.638+0800 I REPL [ReplicationExecutor] dry election run succeeded, running for election | |

| 2017-05-02T17:01:01.670+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:01:01.670+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.672+0800 I REPL [ReplicationExecutor] VoteRequester(term 2) failed to receive response from node3:27017: HostUnreachable: Connection refused | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] VoteRequester(term 2) received a yes vote from node3:27019; response message: {term: 2, voteGranted: true, reason: "", ok: 1.0 } | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] election succeeded, assuming primary role in term 2 | |

| 2017-05-02T17:01:01.689+0800 I REPL [ReplicationExecutor] transition to PRIMARY |

实际上,在 27017 端口实例宕掉的过程中,其它两个节点均会继续针对 27017 端口实例进行心跳检测

| 2017-05-02T17:46:08.384+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27017 | |

| 2017-05-02T17:46:08.384+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Failed to connect to node3:27017 - HostUnreachable: Conn | |

| ection refused2017-05-02T17:46:08.384+0800 I REPL [ReplicationExecutor] Error in heartbeat request to node3:27017; HostUnreachable: Connection | |

| refused |

当 27017 端口实例重新上线时,会自动以 Secondary 角色加入到副本集中

27017 端口实例启动并重新加入副本集的日志信息输出如下:

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] MongoDB starting : pid=3141 port=27017 dbpath=/data/27017 64-bit host=node3 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] db version v3.4.2 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] git version: 3f76e40c105fc223b3e5aac3e20dcd026b83b38b | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] OpenSSL version: OpenSSL 1.0.1e-fips 11 Feb 2013 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] allocator: tcmalloc | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] modules: none | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] build environment: | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] distmod: rhel62 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] distarch: x86_64 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] target_arch: x86_64 | |

| 2017-05-02T17:46:10.616+0800 I CONTROL [initandlisten] options: {net: { port: 27017 }, processManagement: {fork: true }, replication: {replSet: "myapp" }, storage: {dbPath: "/data/27017" }, systemLog: {destination: "file", path: "/var/log/mongodb/27017.log" } } | |

| 2017-05-02T17:46:10.616+0800 W - [initandlisten] Detected unclean shutdown - /data/27017/mongod.lock is not empty. | |

| 2017-05-02T17:46:10.645+0800 I - [initandlisten] Detected data files in /data/27017 created by the 'wiredTiger' storage engine, so setting the active storage engine to 'wiredTiger'. | |

| 2017-05-02T17:46:10.645+0800 W STORAGE [initandlisten] Recovering data from the last clean checkpoint. | |

| 2017-05-02T17:46:10.645+0800 I STORAGE [initandlisten] | |

| 2017-05-02T17:46:10.645+0800 I STORAGE [initandlisten] ** WARNING: Using the XFS filesystem is strongly recommended with the WiredTiger storage engine | |

| 2017-05-02T17:46:10.645+0800 I STORAGE [initandlisten] ** See http://dochub.mongodb.org/core/prodnotes-filesystem | |

| 2017-05-02T17:46:10.645+0800 I STORAGE [initandlisten] wiredtiger_open config: create,cache_size=256M,session_max=20000,eviction=(threads_max=4),config_base=false,statistics=(fast),log=(enabled=true,archive=true,path=journal,compressor=snappy),file_manager=(close_idle_time=100000),checkpoint=(wait=60,log_size=2GB),statistics_log=(wait=0), | |

| 2017-05-02T17:46:11.402+0800 I STORAGE [initandlisten] Starting WiredTigerRecordStoreThread local.oplog.rs | |

| 2017-05-02T17:46:11.436+0800 I STORAGE [initandlisten] The size storer reports that the oplog contains 1040 records totaling to 99955 bytes | |

| 2017-05-02T17:46:11.436+0800 I STORAGE [initandlisten] Scanning the oplog to determine where to place markers for truncation | |

| 2017-05-02T17:46:11.502+0800 I CONTROL [initandlisten] | |

| 2017-05-02T17:46:11.502+0800 I CONTROL [initandlisten] ** WARNING: Access control is not enabled for the database. | |

| 2017-05-02T17:46:11.502+0800 I CONTROL [initandlisten] ** Read and write access to data and configuration is unrestricted. | |

| 2017-05-02T17:46:11.502+0800 I CONTROL [initandlisten] ** WARNING: You are running this process as the root user, which is not recommended. | |

| 2017-05-02T17:46:11.502+0800 I CONTROL [initandlisten] | |

| 2017-05-02T17:46:11.675+0800 I FTDC [initandlisten] Initializing full-time diagnostic data capture with directory '/data/27017/diagnostic.data' | |

| 2017-05-02T17:46:11.744+0800 I NETWORK [thread1] waiting for connections on port 27017 | |

| 2017-05-02T17:46:11.797+0800 I REPL [replExecDBWorker-2] New replica set config in use: {_id: "myapp", version: 3, protocolVersion: 1, members: [{ _id: 0, host: "node3:27017", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 1, host: "node3:27018", arbiterOnly: false, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 }, {_id: 2, host: "node3:27019", arbiterOnly: true, buildIndexes: true, hidden: false, priority: 1.0, tags: {}, slaveDelay: 0, votes: 1 } ], settings: {chainingAllowed: true, heartbeatIntervalMillis: 2000, heartbeatTimeoutSecs: 10, electionTimeoutMillis: 10000, catchUpTimeoutMillis: 2000, getLastErrorModes: {}, getLastErrorDefaults: { w: 1, wtimeout: 0 }, replicaSetId: ObjectId('59082229517dd35bb9fd0d2a') } } | |

| 2017-05-02T17:46:11.797+0800 I REPL [replExecDBWorker-2] This node is node3:27017 in the config | |

| 2017-05-02T17:46:11.797+0800 I REPL [replExecDBWorker-2] transition to STARTUP2 | |

| 2017-05-02T17:46:11.797+0800 I REPL [replExecDBWorker-2] Starting replication storage threads | |

| 2017-05-02T17:46:11.798+0800 I REPL [replExecDBWorker-2] Starting replication fetcher thread | |

| 2017-05-02T17:46:11.798+0800 I REPL [replExecDBWorker-2] Starting replication applier thread | |

| 2017-05-02T17:46:11.798+0800 I REPL [replExecDBWorker-2] Starting replication reporter thread | |

| 2017-05-02T17:46:11.799+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27018 | |

| 2017-05-02T17:46:11.799+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Connecting to node3:27019 | |

| 2017-05-02T17:46:11.799+0800 I REPL [rsSync] transition to RECOVERING | |

| 2017-05-02T17:46:11.801+0800 I REPL [rsSync] transition to SECONDARY | |

| 2017-05-02T17:46:11.801+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27019 | |

| 2017-05-02T17:46:11.801+0800 I ASIO [NetworkInterfaceASIO-Replication-0] Successfully connected to node3:27018 | |

| 2017-05-02T17:46:11.802+0800 I REPL [ReplicationExecutor] Member node3:27019 is now in state ARBITER | |

| 2017-05-02T17:46:11.803+0800 I REPL [ReplicationExecutor] Member node3:27018 is now in state PRIMARY | |

| 2017-05-02T17:46:12.116+0800 I FTDC [ftdc] Unclean full-time diagnostic data capture shutdown detected, found interim file, some metrics may have been lost. OK | |

| 2017-05-02T17:46:12.388+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:44011 #1 (1 connection now open) | |

| 2017-05-02T17:46:12.390+0800 I NETWORK [conn1] received client metadata from 192.168.244.30:44011 conn1: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T17:46:15.744+0800 I NETWORK [thread1] connection accepted from 192.168.244.30:44012 #2 (2 connections now open) | |

| 2017-05-02T17:46:15.745+0800 I NETWORK [conn2] received client metadata from 192.168.244.30:44012 conn2: {driver: { name: "NetworkInterfaceASIO-Replication", version: "3.4.2" }, os: {type: "Linux", name: "Red Hat Enterprise Linux Server release 6.7 (Santiago)", architecture: "x86_64", version: "Kernel 2.6.32-573.el6.x86_64" } } | |

| 2017-05-02T17:46:17.802+0800 I REPL [rsBackgroundSync] sync source candidate: node3:27018 | |

| 2017-05-02T17:46:17.873+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27018 | |

| 2017-05-02T17:46:17.875+0800 I ASIO [NetworkInterfaceASIO-RS-0] Successfully connected to node3:27018 | |

| 2017-05-02T17:46:18.203+0800 I ASIO [NetworkInterfaceASIO-RS-0] Connecting to node3:27018 | |

| 2017-05-02T17:46:18.211+0800 I ASIO [NetworkInterfaceASIO-RS-0] Successfully connected to node3:27018 |

参考

1.《MongoDB 实战》

2.《MongoDB 权威指南》

3. 官方文档

本文永久更新链接地址 :http://www.linuxidc.com/Linux/2017-05/143434.htm